
Component Software. Where is it going?

Friday, January 14th, 2005

Introduction

In 1943 Thomas Watson, the then chairman of IBM, infamously announced “I think there is a world market for maybe five computers”. This statement seems quite humorous when quoted in the context of today. This is not because it was incorrect (there was undoubtedly sense to his reasoning at the time) but because the context of the statement has been so far lost in the progression that has occurred since.

Watson observed the trends of his day and attempted to predict the direction of future progression. However, he had no way of predicting details or gauging the rate at which that progression would advance.

Similarly, in this essay we will examine the trends of today, and then reflect on how they can be used to predict the trends of the future. Sudipto Ghosh [Ghosh02] stated that “all future software systems will be developed from components”. We’ll look at this and other opinions on the future of component systems and how they affect the cost efficiency of software projects.

The Future of Components lies in Reuse

Component software today is about two simple concepts: reuse and composition. Reuse is a regular topic of conversation between software engineers. We often discuss the merits of abstracting a class so that it can be packaged or wrapped, allowing customers to utilise its functionality directly. However, in other branches of engineering you would find little discussion on this or related topics. This is not because reuse is specific to Software Engineering. On the contrary, engineers are expert in selecting and reusing appropriate components in their work. It is the fact that reuse is so commonplace in engineering that makes it, for them, an uncontroversial topic.

Engineers are taught, from their very first lectures, the art of balancing the trade-offs of different components when selecting the most appropriate one for the situation.

Software engineers on the other hand are generally not so good at reuse. Software engineering is still in a “craftsmanship” phase that leads more naturally to rewrite rather than reuse.

The problem is that software is a soft and malleable product that can be moulded into whatever exact shape suits. The question then arises as to whether this perceived advantage of the “softness of software” is really a liability.

One argument, put forward by Ruben Prieto-Diaz [Prieto96], is that the progression of software engineering as a discipline can only really come through the toughening of standards and conventions to impose structure on the pliability of the discipline. He believes that only when software becomes less malleable will reuse, in the forms seen in other engineering disciplines, become practical.

Prieto-Diaz’s findings still bear much relevance to the evolution and progression of component software today. This issue of the softness of software is still pertinent and, as we shall see, many future developments are geared to restricting the directions in which software can be stretched.

Prieto-Diaz’s foresight was not limited to the need for increased structure and standards. He also observed that it is complexity that promotes reuse. His principle states that the more complex a software component, the greater the motivation for reusing it (as opposed to rewriting it from scratch). This concept points to the inevitability of components within software engineering, thus paving the way for the future we see today.

The Future of Components lies in Composition

A different and slightly later view was put forward by Bennett [Benn00], who considered not only reuse but also the aspect of composition, which is a fundamental contributory element of component software. He notes that over the last half-century software processes have been dominated by managing the complexities of the development and deployment of increasingly sophisticated systems.

Bennett’s view is that there needs to be a shift in the focus of software towards users rather than developers. He states that software development needs to be more demand-centric so as to allow it to be delivered as a service within the framework of an open marketplace. The concept being introduced is known as a Service Based Approach to Software and the analogy he uses is one of selling cars.

Historically cars were sold from pre-manufactured stock but increasingly nowadays consumers configure their desired car from a series of options and only then is the final product assembled. The comparable process in software is to allow users to create, compose and assemble a service, dynamically bringing together a number of different suppliers to meet the consumer’s needs.

The issues raised by such a proposal lie in the complexities involved in the late binding of software components. Bennett suggests that his research will make it possible to delay binding until the point of execution. This allows customers to select the various components of their systems from a potential variety of vendors and, from these components, build the customised system of their choice, a concept known as adaptable composition.
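
To make the idea of binding at the point of execution concrete, here is a minimal sketch. It is not taken from Bennett's work: the catalogue, the vendor names and the pricing rules are all hypothetical, chosen only to show a component being resolved at the moment it is needed rather than at build time.

```python
from typing import Callable, Dict

# Hypothetical catalogue: service name -> vendor name -> implementation.
# Every name and rate here is an illustrative assumption.
CATALOGUE: Dict[str, Dict[str, Callable[[float], float]]] = {
    "tax": {
        "vendor_a": lambda amount: round(amount * 0.20, 2),
        "vendor_b": lambda amount: round(amount * 0.175, 2),
    },
    "shipping": {
        "vendor_a": lambda amount: 5.0,
        "vendor_c": lambda amount: 0.0 if amount >= 50 else 4.0,
    },
}


def bind(service: str, vendor: str) -> Callable[[float], float]:
    """Resolve a component only when it is actually needed."""
    try:
        return CATALOGUE[service][vendor]
    except KeyError:
        raise LookupError(f"no component for {service!r} from {vendor!r}") from None


def price_order(amount: float, choices: Dict[str, str]) -> float:
    """Compose a service from the customer's choices at the point of execution."""
    total = amount
    for service, vendor in choices.items():
        component = bind(service, vendor)  # late binding happens here
        total += component(amount)
    return round(total, 2)
```

A customer who picks `vendor_b` for tax and `vendor_c` for shipping gets a different composed system from one who picks `vendor_a` for both, without either system having been assembled in advance.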

These ideas of adaptable composition are extended even further into the future by Howard Shrobe in his paper Software Technology of the Future [Shrobe99]. Shrobe presents an interesting view of the future as one composed of self-adaptive systems that are sensitive to the purposes and goals of the components from which they are composed. Such systems would contain multiple components with similar but slightly disparate roles and the runtime would be able to dynamically determine the most appropriate component for a certain task.

In particular he comments on the long-standing wider research aims to develop tools and methodologies which make impenetrable and properly correct systems. Shrobe doubts the usefulness of such methods in future systems. He believes that many of the problems that require such measures arise from the harshness and unpredictability of the environment rather than the mental limitations of programmers.

Instead, he suggests that a range of techniques and tools will emerge that facilitate the construction of inherently self-adaptive systems, and goes on to predict some of their features. These will include multiple components being available for any single task, with the most appropriate one being selected dynamically by the runtime environment. This is what he calls a Dynamic Domain Architecture. Such architectures are more introspective and reflective than conventional systems. The key elements are:

- Monitors that check that validation conditions hold at various points.

- Diagnosis and isolation services that determine the cause of exceptional conditions.

- Services that select alternative components to use in the event of failure.
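
The three elements above can be sketched in a few lines. This is only an illustration under stated assumptions, not Shrobe's design: the sorting task, the deliberately faulty component and the function names `monitor`, `diagnose` and `run_task` are all hypothetical.

```python
from collections import Counter
from typing import Callable, List, Tuple

Component = Callable[[List[int]], List[int]]


def fast_but_buggy_sort(items: List[int]) -> List[int]:
    # Deliberately faulty stand-in component: it silently drops duplicates.
    return sorted(set(items))


def reliable_sort(items: List[int]) -> List[int]:
    return sorted(items)


def monitor(inputs: List[int], output: List[int]) -> bool:
    """Monitor: the output must be ordered and contain exactly the input elements."""
    ordered = all(a <= b for a, b in zip(output, output[1:]))
    return ordered and Counter(inputs) == Counter(output)


def diagnose(inputs: List[int], output: List[int]) -> str:
    """Diagnosis service: give a coarse cause for a failed check."""
    if Counter(inputs) != Counter(output):
        return "elements lost or added"
    return "output not ordered"


def run_task(
    inputs: List[int], candidates: List[Tuple[str, Component]]
) -> Tuple[List[int], List[str]]:
    """Selection service: try candidates in preference order, falling back on failure."""
    log: List[str] = []
    for name, component in candidates:
        output = component(list(inputs))
        if monitor(inputs, output):
            return output, log
        log.append(f"{name}: {diagnose(inputs, output)}")
    raise RuntimeError("no component satisfied the monitor: " + "; ".join(log))
```

Given an input with duplicates, the runtime rejects the first component, records why, and falls back to the second. A real self-adaptive system would of course need far richer monitors and diagnosis than this.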

Such systems will need to be, in some ways, self-aware and goal directed. Shrobe also foresees the interactions between developers and the system taking the form of a dialogue rather than coding. The developer would offer advice to the system at certain critical points to aid its judgement in how to deal with different situations.

Are these futures realistic?

The views of both Bennett and Shrobe are fairly far reaching. Shrobe’s in particular represents a quite extreme vision. However, all the ideas so far are grounded in the fundamentals of how component software (and software in general) is developed today.

To see how such views can be considered plausible it is useful to consider the motivations for Component Software expressed by other prominent authors. Clemens Szyperski, one of the fathers of Component Software, explores the motivations for current and future trends in component software in his paper Component Technology – What, Where and How? [Szyp02]. Here he divides the motivations for using software components into the four tiers summarised below:

Tier 1: Build Time Composition

Component applications that reside in this tier use prefabricated components in amongst custom development. This strikes a balance between the competitive advantages of purpose-built software and the economic advantage of standard purchased components. Most importantly, components are consumed at development time and released as part of a single custom implementation.

Tier 2: Software Product Lines

Scaling above Tier 1 involves the reuse of partial designs and implementation fragments across multiple products. This is the domain of Software Product Lines [Web1], [Bosch00]. In this tier components are developed for reuse across multiple products. This is similar in some ways to conventional manufacture. An automotive manufacturer may create a variety of unique variations of a single car model. These would be constructed through the use of standard components and production systems that specialise in their configuration and assembly into the various products. A similar concept can be applied to component development and assembly with developers taking roles either as component assemblers or product integrators.

Tier 3: Deployment Composition

In this tier components are integrated as part of the product’s deployment (not at build time). An example of deployment composition is the web browser, which is deployed then subsequently updated with downloaded components that enable specialist functionality on certain web pages. Sun’s J2EE also supports partial composition at deployment time through the use of a deployment descriptor and hence also falls into this category.

Tier 4: Dynamic Upgrade and Extension

In this final tier there are varying degrees of redeployment and automatic installation that facilitate a product that can grow and evolve over its lifetime. This final tier is the realm of current and future research.

What is notable about Szyperski’s tiers is that they are all motivated by financial drivers. Tier 1 arises from the competitive advantage gained through reusing prefabricated components over developing them in house. Tier 2 results from the forces of an economy of scope[1] to extend reuse beyond singular product boundaries and into orchestrated reuse programmes.

In the third and fourth tiers Szyperski switches focus from just reuse to aspects of composition and dynamic upgrade. However the economic motivators here are subtler.

In the third tier they focus on the need for standardisation in a similar vein to that introduced earlier by Prieto-Diaz. Deployment composition generally relies on a framework within which the components operate. This introduces a much-needed discipline to the process as well as offering the opportunity to develop components which leverage the framework itself.

The fourth tier supports dynamic upgradeable and extensible structures and represents Szyperski’s view on the future of component software. Research into applications in this tier provides an extremely challenging set of problems for researchers, such as validation of correctness, robustness and efficiency.

With this fourth tier architecture Szyperski is pointing towards a future of dynamic composition but also notes that it is one that will likely be hindered by the problems of compositional correctness. Validating dynamically composed components in a realistic deployment environment is an extremely complex problem simply as a result of the implementation environment not being known at the time of development.

This is an issue of quality assurance. Firstly, there is no reliable means to exhaustively test integrations at the component supplier’s end. Secondly, there is little in the way of component development standards, certifications or best practices that might help increase consumer confidence in software components by guaranteeing the reliability of vended components.

David Garlan [Gar95] illustrated similar issues a decade ago in the domain of static component assembly. Garlan noted problems with low-level interoperability and architectural mismatch resulting from incompatibilities between the components he studied. Issues such as “which components hold responsibility for execution” or “what supporting services are required” are examples of problems arising from discrepancies in the assumptions made by component vendors.

Garlan listed four sets of improvements which future developments must incorporate to overcome the problems of interoperability and architectural mismatch:

- Make architectural assumptions explicit.

- Construct large pieces of software using orthogonal sub-components.

- Provide techniques for bridging mismatches.

- Develop sources of architectural design guidance.

Whilst these issues were observed when considering static composition (i.e. within Szyperski’s first tier) the same issues are applicable to higher tiers too. Approaches to remedying these issues have been suggested on many levels. One approach is to provide certification of components so that consumers have some guarantee of the quality, reliability and the assumptions made in their construction. Voas introduced a method to determine whether a software component can negatively affect a utilising system [Voas97].

The same concept has been taken further at the Software Engineering Institute (SEI) at Carnegie Mellon with a certification method known as Predictable Assembly from Certifiable Components or PACC [Web2]. Instead of simple black box tests PACC allows component technology to be extended to achieve predictable assembly using certified components. The components are assessed through a validation framework that measures statistical variations in various component parameters (such as connectivity and execution ranges). This in turn allows companies greater confidence in the reliability of the components they assemble.

Szyperski also alludes to a similar conclusion:

“Specifications need to be grounded in framework of common understanding. At the root is a common ontology ensuring agreed upon terminology and domain concepts.” [Szyp02].

He suggests the solution of a specification language, AsmL, which shares some similarities with PACC. AsmL, which is based on the concept of Abstract State Machines [Gure00], is a means for capturing operational semantics at a level of abstraction that fits in with the process being modelled. Put another way, it allows the formalisation of the operations and interactions of the components that it describes in a kind of very rich interface description. This in turn allows processes to be specified and validated with automated test case generators, thus providing verification and correctness by construction.

AsmL has been applied on top of Microsoft’s .NET CLR by Mike Barnett et al. [Barn03] with some successes made in specifying and verifying correctness of composed component systems. In Barnett’s implementation the framework is able to provide notification that components do not meet the required specification (along similar lines to that suggested by Shrobe) but is as yet unable to provide automated support or actually pinpoint the reason for the failure.

Keshava Reddy Kottapally [Web3] presents a near and far future view of component software as being influenced by the development of Architectural Description Languages (ADLs). These ADLs focus on the high-level structure of the overall application rather than implementation details and again arise from similar concepts to those suggested by Szyperski. Physically they provide a specification of a system in terms of components and their interconnections, i.e. they describe what a component needs rather than how it is implemented.

Kottapally’s near future view revolves around adaptation of the currently prominent component architectures (.NET, J2EE, CORBA) to incorporate ADLs. He gives the example that ADL files would be built with builder tools designed specifically for ADL specification. Interfaces such as CORBA IDL could then be generated automatically once the ADL file is in place. The purpose is to facilitate connection-oriented implementations in which the connections can handle different data representations. This would be enabled via bridges between interoperability standards (e.g. a CORBA EJB bridge).

He also suggests a unified move to the new challenges posed by COTS-based development. COTS-Based Systems focus on improving the technologies and practices used for assembling prefabricated components into large software systems [COTS04], [Voas98]. This approach attempts to realign the focus of software engineering from the traditional linear process of system specification and construction to one that considers the system contexts such as requirements, cost, schedule, operating and support environments simultaneously.

Kottapally continues to present a more far-reaching view on the future of CBSD. In particular he highlights several developments he feels are likely to become important:

- The removal of static interfaces to be replaced by architectural frameworks that deal with name resolution via connectors.

- Resolution of versioning issues.

- General take up of COTS.

- Traditional SE transforms to CBSD.

- Software agents will represent human beings acquiring intelligence and travelling in the global network using component frameworks and distributed object technologies.

Components are Better as Families

So far we have seen evidence that the future of component software is likely to be grounded in the issues that facilitate both the static and dynamic composition within software products. We have also seen that some efforts have already been made to increase the rigidity of the environments in which these products operate thus allowing compositions to become more reliable. However there is another set of views on how we achieve these truly composable systems that originate from a slightly different tack.

Greenfield et al [GSCK04] foresee a more systematic approach to reuse arising from the integration of several critical innovations to produce a process akin to the industrialisation observed in other industries. This goes somewhat beyond the realm of Component Software and considers issues such as the development of domain specific languages and tools to reduce the amount of handwritten code. However, they do express several interesting opinions on the application of component software in their vision of the future.

Greenfield et al make two statements in particular that encapsulate what they feel to be the most critical developments in component software:

- “Building families of similar but distinct software products to enable a more systematic approach to reuse”.

- “Assembling self-describing service components using new encapsulation, packaging, and orchestration technologies”.

The first point refers to systematic approaches, such as the Software Product Lines introduced earlier. Studies have shown [Clem01] that applying Software Product Line principles allows levels of reuse in excess of two thirds of the total utilised source (a level that would be difficult to achieve through regular component assembly methods).

Greenfield puts forward the view that the environment of software development will be fundamentally changed by the introduction of such high levels of reuse. This in turn will induce the arrival of software supply chains.

A supply chain is a chain of stages with raw materials at one end and a finished product at the other. The intermediate steps involve participants combining products from upstream suppliers, adding value, then passing them on down the chain. Greenfield claims that the introduction of supply chains will act as a force to standardise, something observed as a necessity by most authors on the subject of software component evolution.

Greenfield’s second point, listed above, refers to the concept of Self-Description. Self-Description allows components to describe the assumptions, dependencies and behaviour that are intrinsic to their execution, thus providing operational as well as contractual data. This level of meta-data will allow a developer or even a system itself to reason about the interactions between components.
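
As a rough illustration of how such meta-data might let a system reason about a composition before anything is executed, consider the sketch below. The `Description` record and its fields are hypothetical and are not drawn from Greenfield et al.; they simply model what a component provides, what it requires and what it assumes about its environment.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set, Tuple


@dataclass
class Description:
    """Hypothetical self-description published alongside a component."""
    name: str
    provides: Set[str]
    requires: Set[str] = field(default_factory=set)
    assumptions: Dict[str, str] = field(default_factory=dict)


def check_composition(components: List[Description]) -> List[str]:
    """Return the problems found by reasoning over the descriptions alone."""
    problems: List[str] = []

    # Dependencies: everything required must be provided by some component.
    provided: Set[str] = set()
    for c in components:
        provided |= c.provides
    for c in components:
        for missing in sorted(c.requires - provided):
            problems.append(f"{c.name} requires {missing}, which nothing provides")

    # Assumptions: two components must not disagree about the same property.
    seen: Dict[str, Tuple[str, str]] = {}
    for c in components:
        for key, value in sorted(c.assumptions.items()):
            if key in seen and seen[key][1] != value:
                other, other_value = seen[key]
                problems.append(
                    f"{c.name} assumes {key}={value}, "
                    f"but {other} assumes {key}={other_value}"
                )
            else:
                seen.setdefault(key, (c.name, value))
    return problems
```

An empty result means the descriptions are mutually consistent; it says nothing about whether the components actually behave as they describe themselves, which is where certification comes in.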

This idea is extended further via the extension of modelling languages, such as UML, to a level that allows them to describe development rather than just providing documentation of the development process. In such a vision the modelling language forms an integral part of the deployment.

There are similarities here to the concept of AsmL put forward by Szyperski earlier. In addition Greenfield, like Szyperski, also emphasises the need for executing platforms to proceed to higher levels of abstraction:

“Together these lead to the prospect of an architecturally-driven approach to model-driven development of product families”. ([GSCK04] p452)

It is also interesting to note that the concept of self-description follows on logically from the points Garlan made earlier regarding architectural assumptions being explicit and the bridging of architectural mismatches.

So what of the future?

Components are primarily designed for composition. One of the main attractions of any component-based solution is the ability to compose and recompose the solution using products from potentially different vendors. We have seen examples of issues with static composition raised over a decade ago [Gar95] and the same issues are pointed out time and time again ([Szyp02], [GSCK04], [Voas97], [Web3], [SzypCS]). We have seen solutions suggested including self-description and ADLs. However, one of the main aims is to produce agile software constructions and this includes the ability to compose systems dynamically, even at runtime.

Whether these visions actually come into being is difficult to say. It is certainly true that the interactions in these structures are increasingly complex and that already there are observable tradeoffs to be made by developers with respect to performance versus compositional variance (as highlighted currently with frameworks such as Sun’s J2EE). In the next section we will consider the financial implications of component technologies and attempt to determine whether they actually provide practical cost benefits for consumers both now and in the future.

Are Component Technologies Cost Effective?

Szyperski’s four motivational tiers, introduced earlier, coupled with the fact that each increasing tier requires more refined competencies, lead to the concept of a Component Maturity Model [Szyp02]. The levels are distinguished as:

1. Maintainability: Modular Solutions.

2. Internal Reuse: product lines.

3a. Closed Composition: make and buy from a closed pool of organisations

3b. Open Composition: make and buy from open markets

4. Dynamic Upgrade

5. Open and Dynamic

To consider the cost effectiveness of component software it is convenient to consider the financial drivers within each of these levels.

Level 1. Maintainability: Modular Solutions.

At this level components are produced in house and reused within a project. The aim from an economic standpoint is to reduce costs by promoting reuse. From a development position the “rule of thumb” is that a component becomes cost effective once it has been reused three times [SzypCS]. This property emerges from the trade off between the cost of redeveloping a component when it is needed against the increased initial cost of an encapsulated and reusable solution. This relationship is shown in fig 1.


Economic returns are generally increased further when maintenance costs are also considered due to the lower maintenance burden of a single (if slightly larger) source object.
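
The rule of thumb for this level can be illustrated with a small worked calculation. The cost model and the figures are assumptions made purely for illustration and are not taken from [SzypCS]: a bespoke implementation is rewritten each time it is needed, whereas a reusable component costs more up front and then incurs a smaller integration cost on each use.

```python
def break_even_uses(bespoke_cost: int, reusable_cost: int, integration_cost: int) -> int:
    """Smallest number of uses at which the reusable component is no more
    expensive in total than rewriting a bespoke solution every time.

    All three costs are hypothetical inputs in the same unit (e.g. person-days).
    """
    if integration_cost >= bespoke_cost:
        raise ValueError("reuse never pays off if integrating costs as much as rewriting")
    uses = 1
    while reusable_cost + uses * integration_cost > uses * bespoke_cost:
        uses += 1
    return uses
```

With assumed figures of 10 person-days for a bespoke solution, 20 for the reusable component and 3 per integration, the totals are 23 against 10 after one use, 26 against 20 after two, and 29 against 30 after three, so under these assumptions the component pays for itself on its third use.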

Level 2. Internal Reuse: Product Lines

Internal reuse in the form of product lines, as introduced earlier, involves reusing internally developed components across a range of similar products within a product line. The economic impact is multifaceted. Product lines increase efficiency as they dramatically increase the level of component reuse that can be sustained in a development cycle. However these rewards reaped from the cross asset utilisation of shared components must be offset against the increased managerial and logistical stresses imposed by such an interdependent undertaking.

Level 3a/b. Closed and Open Composition: make and buy from a closed pool of organisations or from open markets

We have seen that there is significant evidence to suggest economic advantage from the use of modular development. The economic advantages of reuse in an OO sense are compelling and this fact alone was a major factor in the success of the object-oriented revolution of the end of the last century. However, it is when this concept is extended to reuse across company boundaries that the economic benefits become really interesting.

Component reuse offers the potential for dramatic savings in development costs if executed successfully. Never before has the concept of non-linear productivity been on offer to software organisations. Quoting Szyperski [SzypCS]:

“As long as solutions to problems are created from scratch [i.e. regular development], growth can be at most linear. As components act as multipliers in a market, growth can become exponential. In other words, a product that utilises components benefits from the combined productivity and innovation of all component vendors”.

The use of prefabricated components offers the potential to compose hugely complex software constructions at a fraction of their development cost simply by purchasing the constituent parts and assembling them to form the desired product. It is this promise of instant competitive advantage that makes the use of components so compelling, and it is this that makes them truly cost effective.

In fact the dynamics of a software market fundamentally change when components are introduced. When a certain domain becomes large enough to support a component market of sufficient size, quality and liquidity, the creation of that market becomes inevitable. The adoption of components by software developers then becomes a necessity. Standard solutions are forced to utilise these components in order to keep up with competitors. At this point competitive advantage can only be achieved by adding additional functionality to that offered by the composition of available components within the software market.

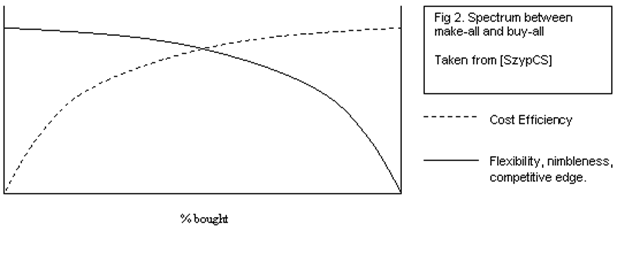

The important balance to consider is one between the flexibility, nimbleness and competitive edge provided by regular programming and the cost efficiencies provided by reusing prefabricated components. This relationship is shown in fig 2.

This concept of development by assembly was in fact one of the important changes promulgated by the industrial revolution. The advent of assembly lines marked the transition from craftsmanship to industrialisation. The analogy is useful if software development is considered to be in a similar period of craftsmanship, implying that taking the same steps will bring industrialisation to the software industry. However, a number of subtle differences have manifested themselves that have resulted in little of the predicted revolution in component utilisation actually taking place.

This slowness in take up can be attributed to a number of factors:

- Lack of liquidity in component markets: Many markets lack liquidity or companies fail to address the difficult marketing issues presented by an immature market such as component software.

- Integration issues such as platform specific protocols.

- Lack of transparency in component solutions and weak packaging. Black box solutions often hide true implementation details and documentation can be weak.

- Reliability issues. Black/Glass box solutions can prove problematic for customers as minor inaccuracies in product specification can prove challenging or impossible to fix. Raising issues back to the vendor is rarely a practical solution.

- The “not invented here” syndrome. Suspicion of vendor components leads to the dominance of in-house construction. In addition components that are used are often only applied in opportunistic manners rather than as an integrated part of the design.

Points 3, 4 and 5 represent the major differences between closed pool and open market acquisition. The closed pool allows companies greater confidence in the component manufacture through the building of a mutually beneficial relationship between client and vendor. However the reduction in breadth of components available restricts the opportunity for full leverage from the component market at large.

Level 4+5. Open and Dynamic Upgrade:

The efficiency of dynamic upgrade is easy to judge at present, as the technology that can currently be implemented is too unreliable to be efficient. However, future applications of dynamic upgrade are likely to appear in performance-oriented environments that can reap large benefits from the extra flexibility offered. Applications such as mobile phone routing are potential candidates, where the opportunity to dynamically switch encapsulated components in and out of a hot system is highly valued because it avoids down time.

Conclusions

So is component software cost efficient? The answer to this question, as with many, lies in the context in which it is asked. The efficiency of component software varies according to the maturity level at which it is applied. At lower levels economic benefits arise from reuse as part of the development process. This has a significant if not exceptional effect on efficiency.

As utilisation moves to a level that consumes vendor components, the potential for economic advantage increases dramatically. Companies at this maturity level can achieve exponential product growth. Hence, in answer to the question posed, component software provides the possibility for substantial increases in cost efficiency. But this potential is, as yet, unrealised in most software markets. This lack of take up of component software can be traced to two specific and interdependent aspects:

On one side is the ideology of software engineering itself. Software engineers are brought up to develop software rather than assemble components. It is only natural that they should favour the comforts of an environment they are familiar with over the foreboding challenges imposed by the world of assembly.

On the other side there are significant problems with the components of today, resulting from issues of their implementation in general, which make them hard to use.

As we look to the future, and component markets mature, it is likely that the issues of integration highlighted earlier in the paper will be resolved. This in turn should induce closer relationships between customers and suppliers, strengthening the process as well as increasing confidence in assembly as a practical and reliable methodology for industrial application construction.

But the future is a hard thing to predict. The world contains a substantially larger number of computers than Watson originally predicted. This does not mean Watson was wrong though. It simply means he has not, yet, been proved right. It is entirely possible that we may end up with a world containing only a handful of computers, depending of course, on your definition of a computer. The reality though is that, when it comes to the future, the only truly accurate option is to simply wait and see.

References

[Barn03] Barnett et al: Serious Specification for Composing Components. 6th ICSE Workshop on Component-Based Software Engineering

[Benn00] Service-based software: the future for flexible software, K. Bennett, P. Layzell, D. Budgen, P. Brereton, L. Macaulay, M. Munro: Seventh Asia-Pacific Software Engineering Conference (APSEC’00)

[Bosch00] J. Bosch: Design and Use of Software Architectures: Adopting and Evolving a Product Line Approach. Addison-Wesley 2000

[Clem01] Software Product Lines: Practices and Patterns: Clements and Northrop

[COTS04] http://www.sei.cmu.edu/cbs/overview.html

[Gar95] David Garlan: Architectural Mismatch or Why it’s hard to build systems out of existing parts.

[Ghosh02] “Improving Current Component Development Techniques for Successful Component-Based Software Development,” S. Ghosh. 7th International Conference on Software Reuse Workshop on Component-based Software Development Processes, Austin, April 16, 2002.

[GSCK04] Software Factories: Greenfield, Short, Cook and Kent. Wiley 2004

[Gure00] Y. Gurevich: Sequential Abstract State Machines Capture Sequential Algorithms: ACM Transactions on Computational Logic.

[Pour98] Gilda Pour: Moving Toward Component-Based Software Development Approach 1998 Technology of Object-Oriented Languages and Systems

[Prieto96] Ruben Prieto-Diaz: Reuse as a New Paradigm for Software Development. Proceeding of the International Workshop on Systematic Reuse. Liverpool 1996.

[Shrobe99] Howard Shrobe, MIT AI Laboratory, Software Technology of the Future 1999 IEEE Symposium on Security and Privacy

[Szyp02] Clemens Szyperski: Component Technology – What, Where and How?

[SzypCS] Clemens Szyperski: Component Software – Beyond Object-Oriented Programming. Second Edition Addison-Wesley

[Voas97] Jeffrey Voas: An approach to certifying off-the-shelf software components 1997

[Voas98] Jeffrey Voas: The Challenges of Using COTS Software in Component-Based Development (Computer Magazine)

[Web1] http://www.softwareproductlines.com/

[Web2] http://www.sei.cmu.edu/pacc

[Web3] Keshava Reddy Kottapally: ComponentReport1: http://www.cs.nmsu.edu/~kkottapa/cs579/ComponentReport1.html

[1] Software is subject to the forces of an economy of scope rather than an economy of scale. Economies of scale arise when copies of a prototype can be mass-produced at reduced cost via the same production assets. Such forces do not apply to software development where the cost of producing copies is negligible. Economies of scope arise when production assets are reused to produce similar but distinct products.

Do Metrics Have a Place in Software Engineering Today?

Sunday, March 14th, 2004

Introduction

The famous British physicist Lord Kelvin (1824-1904) once commented:

“When you can measure what you are speaking about, and express it in numbers, you know something about it; but when you cannot measure it, when you cannot express it in numbers, your knowledge is of a meagre and unsatisfactory kind. It may be the beginning of knowledge, but you have scarcely, in your thoughts, advanced to the stage of science.”

This statement, when applied to software engineering, reflects harshly upon the software engineer that believes themselves to really be a computer scientist. The fundamentals of any science lie in its ability to prove or refute theory through observation. Software engineering is no exception to this yet, to date, we have failed to provide satisfactory empirical evaluations of many of the theories we hold as truths.

I take the view that comprehensibility should be the main driver behind software design, other than satisfying business and functional requirements, and that the route to this goal lies in minimisation of code complexity. Software comprehension is an activity performed early in the software development lifecycle and throughout the lifetime of the product and hence it should be monitored and improved during all phases. In this paper I will reflect specifically on methods through which software metrics can aid the software development lifecycle through their ability to measure, and allow us to reason about, software complexity.

Kelvin says that if you cannot measure something then your knowledge is of an unsatisfactory kind. What he is most likely alluding to in this statement is that any understanding that is based on theory but lacks quantitative support is inherently subjective. This is a problem prevalent within our field. Software Engineering contains a plethora of self-appointed experts promoting their own, often unsubstantiated, views. Any scientific discipline requires an infrastructure that can prove or refute such claims in an objective manner. Metrics lie at the essence of observation within computer science and are therefore pivotal in this aim.

In the conclusion to this paper I reflect on the proposition that metrics are more than just a way of optimising system construction, they provide the means for measuring, reasoning about and validating a whole science.

Measuring Software

Software measurement, since its conception in the late 1960s, has striven to provide measures on which engineers may develop the subject of Software Engineering. One of the earliest papers on software metrics was published by Akiyama in 1971 [8].

Akiyama attempted to use metrics for software quality prediction through a crude regression based model that measured module defect density (number of defects per thousand lines of code). In doing this he was one of the first to attempt the extraction of an objective measure of software quality through the analysis of observables of the system. To date defect counts form one of the fundamental measurements of a software system (although a general distinction between pre and post release defects is usually made).
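The defect density measure itself is simple arithmetic. As a minimal sketch (the function name and the module figures are my own, invented purely for illustration, and this is not Akiyama's regression model), it might be computed like this:

```python
def defect_density(defects: int, lines_of_code: int) -> float:
    """Return the number of defects per thousand lines of code (KLOC)."""
    if lines_of_code <= 0:
        raise ValueError("lines_of_code must be positive")
    return defects / (lines_of_code / 1000)


# Hypothetical modules as (defect count, lines of code); not real data.
modules = {"parser": (12, 4000), "scheduler": (3, 1500)}

densities = {
    name: defect_density(defects, loc)
    for name, (defects, loc) in modules.items()
}

for name, density in densities.items():
    print(f"{name}: {density:.1f} defects per KLOC")
```

Normalising by size is what allows modules of very different lengths to be compared on the same scale.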

In the following years there was an explosion of interest in software metrics as a means for measuring software from a scientific standpoint. Developments such as Function Point measures pioneered in 1979 by Albrecht [17] are a good example. The new field of software complexity also gained a lot of interest, largely pioneered by Halstead and McCabe.

Halstead proposed a series of metrics based on studies of human performance during programming tasks [11]. They represent composite, statistical measures of software complexity using basic features such as number of operands and operators. Halstead performed experiments on programmers that measured their comprehension of various code modules. He validated his metrics based on their performance.
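To make the idea concrete, here is a small sketch of the commonly quoted Halstead measures (vocabulary, length, volume, difficulty and effort). It assumes the tokens have already been classified into operators and operands by hand, which in practice is the language-dependent and contentious part; the function name and the example statement are my own.

```python
import math
from typing import Dict, Sequence


def halstead(operators: Sequence[str], operands: Sequence[str]) -> Dict[str, float]:
    """Compute basic Halstead measures from pre-classified tokens."""
    n1, n2 = len(set(operators)), len(set(operands))  # distinct counts
    N1, N2 = len(operators), len(operands)            # total counts
    vocabulary = n1 + n2
    length = N1 + N2
    volume = length * math.log2(vocabulary)
    difficulty = (n1 * N2) / (2 * n2)
    return {
        "vocabulary": vocabulary,
        "length": length,
        "volume": volume,
        "difficulty": difficulty,
        "effort": difficulty * volume,
    }


# Tokens of the statement `z = x + y * x`, classified by hand.
measures = halstead(operators=["=", "+", "*"], operands=["z", "x", "y", "x"])
print(measures)
```

Note that nothing here looks at names, comments or structure: the measures depend only on counts, which is both their appeal and their limitation.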

McCabe presented a measure of the number of linearly independent circuits through the program [10]. This measure aims specifically to gauge the complexity within the software resulting from the number of distinct routes through a program.
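McCabe's measure is usually stated as V(G) = E - N + 2P, where E is the number of edges in the control-flow graph, N the number of nodes and P the number of connected components. The sketch below applies that formula to a hand-built graph; the graph representation and node names are assumptions of mine, and deriving the graph from real source code is left out.

```python
from typing import Dict, List


def cyclomatic_complexity(cfg: Dict[str, List[str]], components: int = 1) -> int:
    """Return V(G) = E - N + 2P for a control-flow graph.

    `cfg` maps each node to the list of nodes it can branch to.
    """
    nodes = set(cfg)
    for targets in cfg.values():
        nodes.update(targets)
    edges = sum(len(targets) for targets in cfg.values())
    return edges - len(nodes) + 2 * components


# A single if/else: two routes from entry to exit.
if_else = {
    "entry": ["then", "else"],
    "then": ["exit"],
    "else": ["exit"],
    "exit": [],
}

# Straight-line code: one route only.
straight = {"entry": ["exit"], "exit": []}

print(cyclomatic_complexity(if_else))
print(cyclomatic_complexity(straight))
```

Each additional decision point adds one to the result, which is why the figure is often read as the minimum number of test paths needed to cover every branch.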

The advent of Object Orientation in the 1990s saw a resurgence of interest as researchers attempted to measure and understand the issues of this new programming paradigm. This was most notably pioneered by Chidamber and Kemerer [2] who wrapped the basic principles of Object Orientated software construction in a suite of metrics that aim to measure the different dimensions of software.

This metrics suite was investigated further by Basili and Briand [25] who provided empirical data that supported their applicability as measures of software quality. In particular they note that the metrics proposed in [2] are largely complementary (see later section on metrics suites).

These metrics not only facilitate the measurement of Object Orientated systems but also lead to the development of a conceptual understanding of how these systems act. This is particularly notable with metrics like Cohesion and Coupling which a wider audience now considers as basic design concepts rather than just software metrics. However questions have been raised over their correctness from a measurement theory perspective [26,27,30] and as a result optimizations have been suggested [31].
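As an illustration of how a design concept such as cohesion becomes a number, the sketch below follows one common formulation of the Chidamber and Kemerer lack-of-cohesion measure: count the pairs of methods that share no instance variables, subtract the pairs that share at least one, and floor the result at zero. The class and its field usage are hypothetical, and other formulations of cohesion exist.

```python
from itertools import combinations
from typing import Dict, Set


def lack_of_cohesion(method_fields: Dict[str, Set[str]]) -> int:
    """Return max(|P| - |Q|, 0), where P is the set of method pairs
    sharing no fields and Q the set of pairs sharing at least one."""
    disjoint = shared = 0
    for fields_a, fields_b in combinations(method_fields.values(), 2):
        if fields_a & fields_b:
            shared += 1
        else:
            disjoint += 1
    return max(disjoint - shared, 0)


# Hypothetical class: which instance variables each method touches.
account = {
    "deposit": {"balance"},
    "withdraw": {"balance"},
    "rename": {"owner"},
}

print(lack_of_cohesion(account))
```

A result above zero hints that the class may be doing more than one job. The questions raised over such measures apply here too: the figure depends heavily on how field usage is counted.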

A second complementary set of OO metrics was proposed by Abreu in 1995 [32]. This suite, denoted the MOOD Metrics Set, encompasses similar concepts to Chidamber and Kemerer but from a slightly different, more system-wide, viewpoint.

To date there are over 200 documented software metrics designed to measure and assess different aspects of a software system. Fenton [12] states that the rationale of almost all individual metrics for measuring software has been motivated by one of two activities: –

1. The desire to assess or predict the effort/cost of development processes.

2. The desire to assess or predict quality of software products.

When considering the development of proper systems, systems that are fit for purpose, the quality aspects in Fenton’s second criterion, in my opinion, outweigh those of cost or effort prediction. Software quality is a multivariate quantity and its assessment cannot be made by any single metric [12]. However one concept that undoubtedly contributes to software quality is the notion of System Complexity. Code complexity and its ensuing impact on comprehensibility are paramount to software development due to its iterative nature. The software development process is cyclical with code often being revisited frequently for maintenance and extension. There is therefore a clear relationship between the costs of these cycles and the complexity and comprehensibility of the code.

There are a number of attributes that drive the complexity of a system. In Software Development these include system design, functional content and clarity. To determine whether metrics can help us improve the systems that we build we must look more closely at Software Complexity and what metrics can or cannot tell us about its underlying nature.

Software complexity

The term ‘Complexity’ is used frequently within software engineering but often when alluding to quite disparate concepts. Software complexity is defined in IEEE Standard 729-1983 as: –

“The degree of complication of a system or system component, determined by such factors as the number and intricacy of interfaces, the number and intricacy of conditional branches, the degree of nesting, the types of data structures, and other system characteristics.”

This definition has widely been recognized as a good start but lacking in a few respects. In particular it takes no account of the psychological factors associated with the comprehension of physical constructs.

Most software engineers have a feeling for what makes software complex. This tends to arise from a conglomerate of different concepts such as coupling, cohesion, comprehensibility and personal preferences. Dr. Kevin Englehart [19] divides the subject into three sections: –

– Logical Complexity e.g. McCabe’s Complexity Metric

– Structural Complexity e.g. Coupling, Cohesion etc.

– Psychological/Cognitive/Contextual Complexity e.g. comments, complexity of control flow.

Examples of logical and structural metrics were discussed in the previous section. Psychological/Cognitive metrics have been more of a recent phenomenon driven by the recognition that many problems in software development and maintenance stem from issues of software comprehension. They tend to take the form of analysis techniques that facilitate improvement of comprehension rather than actual physical measures.

The Kinds of Lines of Code metric proposed in [28] attempts to measure cognitive complexity through the categorization of code comprehension at its lowest level. Analysis with this metric gives a measure of the relative difficulty associated with comprehending a code module. This idea was developed further by Rilling et al [33] with a metric called Identifier Density. This metric was then combined with static and dynamic program slicing to provide a complementary method for code inspection.

Consideration of the more objective, logical and structural aspects of complexity is still a hugely challenging task, due to the number of factors that contribute to the overall complexity of a software system. In this paper I consider complexity to comprise all three of the aspects listed above but note that there is a base level associated with any application at any point in time. The complexity level can be reduced by refactoring sections that are redundant or accidentally complex, but a certain level of functional content will always have a corresponding base level of complexity.

Within research there has been, for some, a desire to identify a single metric that encapsulates software complexity. Such a consolidated view would indeed be hugely beneficial, but many researchers feel that such a solution is unlikely to be forthcoming due to the overwhelming number of, as yet undefined, variables involved. There are existing metrics that measure certain dimensions of software complexity but they do so often only under limited conditions and there are almost always exceptions to each. The complex relationships between the dimensions, and the lack of conceptual understanding of them, add additional complication. George Stark illustrates this point well when he likens Software Complexity to the study of the weather.

“Everyone knows that today’s weather is better or worse than yesterdays. However, if an observer were pressed to quantify the weather the questioner would receive a list of atmospheric observations such as temperature, wind speed, cloud cover, precipitation: in short metrics. It is anyone’s guess as to how best to build a single index of weather from these metrics.”

So the question then follows: If we want to measure and analyze complexity but cannot find direct methods of doing so, what alternative approaches are likely to be most fruitful for fulfilling this objective?

To answer this question we must first delve deeper into the different means by which complex systems can be analyzed.

Approaches to Understanding Complex Systems

There are a variety of methods for gathering understanding about complex systems that are employed in different scientific fields. In the physical sciences systems are usually analyzed by breaking them into their elemental constituent parts. This powerful approach, known as Reductionism, attempts to understand each level in terms of the next lower level in a deterministic manner.

However such approaches become difficult as the dimensionality of the problem increases. Increased dimensionality promotes dynamics that are dominated by non-linear interactions that can make overall behaviour appear random [20].

Management science and economics are familiar with problems of a complex, dynamic, non-linear and adaptive nature. Analysis in these fields tends to take an alternative approach in which rule sets are derived that describe particular behavioural aspects of the system under analysis. This method, known as Generalization, involves modelling trends from an observational perspective rather than a Reductional one.

Which approach should be taken, Reductionism or Generalization, is decided by whether the problem under consideration is deterministic. Determinism implies that the output is uniquely determined by the input. Thus a prerequisite for a deterministic approach is that all inputs can be quantified directly and that all outputs can be objectively measured.

The main problem in measuring the complexity of software through deterministic approaches comes from difficulty in quantifying inputs due to the sheer dimensionality of the system under analysis.

As a final complication, software construction is a product of human endeavour and as such contains sociological dependencies that prevent truly objective measurement.

Using metrics to create multivariate models

To measure the width of this page you might use a tape measure. The tape measure might read 0.2m and this would give you an objective statement which you could use to determine whether it might fit in a certain envelope. In addition the measurement gives you a conceptual understanding of the page size.

Determining whether it is going to rain is a little trickier. Barometric pressure will give you an indicator with which you make an educated guess but it will not provide a precise measure. Moreover it is difficult to link the concept of pressure with it raining. This is because the relationship between the two is not defining.

What is really happening of course is that pressure is one of the many variables that together contribute to rainfall. Thus any model that predicts weather will be flawed if other variables, such as temperature, wind speed or ground topologies are ignored.

The analysis of Software Complexity is comparable to this pressure analogy in that there is disparity between the attributes that we can currently measure, the concepts that are involved and the questions we wish answered.

Multivariate models attempt to combine as many metrics as are available in a way that maximizes the dimension coverage within the model. They also can examine the dependencies between variables. Complex systems are characterized by the complex interactions between these variables. A good example is the double pendulum which, although comprising only two single pendulums, quickly falls into a chaotic pattern of motion. Various multivariate techniques are documented that tackle such interdependent relationships within software measurement. They can be broadly split into two categories:

1. The first approach notes that it is the dependencies between metrics that form the basis for complexity. Thus examination of these relationships provides analysis that is deeper than that created with singular metrics as it describes the relationship between metrics. Halstead’s theory of software science [23] is probably the best-known and most thoroughly studied example of this.

2. The second set is more pragmatic about the issue. They accept that there is a limit to what we can measure in terms of physical metrics and they suggest methods by which those metrics available can be combined in a way that maximizes benefit. Fenton’s Bayesian Nets [4] are a good example of this although their motivation is more heavily focused on the prediction of software cost than the evaluation of its quality.

Metrics suites

One of the popular methods for dealing with the multi-dimensionality of complexity is by associating different metrics within a metrics suite. Methods such as those discussed in [13], [14] follow this approach. The concept is to select metrics that are complementary and together give a more accurate overview of the system’s complexity than each individual metric would alone.

Regression Based and stochastic models

The idea of combining metrics can be extended further with regression-based models. These models use statistical techniques such as factor analysis over a set of metrics to identify a small number of unobservable facets that give rise to complexity.

Such models have had some success. In 1992 Brocklehurst and Littlewood [21] demonstrated that a stochastic reliability growth model could produce accurate predictions of the reliability of a software system provided that a reasonable amount of failure data can be collected.

Models like that produced by Stark and Lacovara [15] use factor analysis with standard metrics as observables. The drawback of these methods is that the resulting models can be difficult to interpret due to their “black box” analysis methodologies. Put another way, the results they produce cannot be attributed to a causal relationship and hence their interpretation is more difficult.

Halstead [23] presented a statistical approach that looks at total number of operators and operands. The foundation of this measure is rooted in information theory – Zipf’s laws of natural languages, and Shannon’s information theory. Good agreement has been found between analytic predictions using Halstead’s model and experimental results. However, it ignores the issues of variable names, comments, choice of algorithms or data structures. It also ignores the general issues of portability, flexibility and efficiency.

Causal Models

Fenton [12] suggests an alternative that uses a causal structure of software development which makes the results much easier to interpret. His proposal utilizes Bayesian Belief Networks. These allow those metrics that are available within a project to be combined in a probabilistic network that maps the causal relationships within the system.

These Bayesian Belief Nets also have the added benefit that they include estimates of the uncertainty of each measurement. Any analytical technique that attempts to provide approximate analysis must also provide information on the accuracy of the results and this is a strong benefit of this technique.
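To give a feel for the mechanics, here is a toy three-node network in which design complexity and testing effort both influence whether many defects are found. It is a sketch of the general technique rather than Fenton's actual model: the node names, the structure and every probability are assumptions I have invented for illustration. Inference is done by brute-force enumeration of the joint distribution.

```python
from itertools import product

# Hypothetical prior beliefs; none of these figures come from real data.
P_COMPLEX = {True: 0.3, False: 0.7}   # P(design is complex)
P_TESTED = {True: 0.5, False: 0.5}    # P(testing effort is high)

# P(many defects found | complex, tested): a causal link with two parents.
P_MANY_DEFECTS = {
    (True, True): 0.7,
    (True, False): 0.4,
    (False, True): 0.2,
    (False, False): 0.1,
}


def joint(is_complex: bool, tested: bool, many_defects: bool) -> float:
    """Joint probability of one full assignment of the three nodes."""
    p_many = P_MANY_DEFECTS[(is_complex, tested)]
    p_defects = p_many if many_defects else 1.0 - p_many
    return P_COMPLEX[is_complex] * P_TESTED[tested] * p_defects


def posterior_complex(many_defects: bool) -> float:
    """P(design is complex | observation about defects found)."""
    numerator = sum(joint(True, t, many_defects) for t in (True, False))
    evidence = sum(
        joint(c, t, many_defects)
        for c, t in product((True, False), repeat=2)
    )
    return numerator / evidence


print(f"prior belief in complexity:     {P_COMPLEX[True]:.2f}")
print(f"after observing many defects:   {posterior_complex(True):.2f}")
print(f"after observing few defects:    {posterior_complex(False):.2f}")
```

The output is a probability rather than a verdict, which is the point made above: the model reports how confident it is, and because testing effort is an explicit node, a low defect count is not automatically read as a sign of good design.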

Successes and Failures in Software Measurement

In spite of the advances in measurement presented by the various methods discussed above there are still problems evident in the field. The disparity between research into new measurement methods and their uptake in industrial applications highlights these problems.

There are 30+ years of research into software metrics and far in excess of 200 different software metrics available yet these have barely penetrated the mainstream software industry. What has been taken up also tends to be based on many of the older metrics such as Lines of Code, Cyclomatic Complexity and Function Points which were all developed in or before the 1970s.

The problem is that prospective users tend to prefer the simpler, more intuitive metrics such as lines of code as they involve none of the rigmarole of the more esoteric measures [12]. Many metrics and consolidation processes lack strong empirical backing or theoretical frameworks. This leaves users with few compelling motivations for adopting them. As a result these new metrics rarely appear any more reliable than their predecessors and are often difficult to digest. These factors have contributed to their lack of popularity.

However metrics implemented in industry are often motivated by different drivers to those of academia. Their utilization is often motivated by a desire to increase certification levels (such as CMM [22]). They are sometimes seen as something used as a last resort for projects that are failing to hit quality or cost targets. This is quite different from the academic aim of producing software of better quality or rendering more effective management.

So can metrics help us build better systems?

Time and cost being equal and business drivers aside, the goal of any designer is to make their system easy to understand, alter and extend. By maximizing comprehensibility and ease of extension the designer ensures that the major burden in any software project, the maintenance and extension phases, is reduced as much as possible.

In a perfect world this would be easy to achieve. You would simply take your “complexity ruler” and measure the complexity of your system. If it was too complex you might spend some time improving the design.

However, as I have shown, there is no easily achievable “complexity ruler”. As we have seen software complexity extends into far more dimensions than we can currently model with theory, not to mention accurately measure.

But nonetheless, the metrics we have discussed give useful indicators for software complexity and as such are a valuable tool within the development and refactoring process. Like the barometer example they give an indicator of the state of the system.

Their shortcomings arise from the fact that they must be used retrospectively when determining software quality, as metrics can only provide information after the code has been physically put in place. This is of use if you are a manager in a large team trying to gauge the quality of the software coming from the many developers you may oversee. It is less useful when you are trying to prevent the onset of excessive or accidental complexity when designing a system from scratch. Reducing complexity through refactoring retrospectively is known to be far more expensive than a pre-emptive design. Thus a pre-emptive measure of software complexity that could be integrated at design time would be far more attractive.

So my conclusion must be that current complexity metrics provide a useful, if somewhat limited, tool for analysis of the system attributes but are, as yet, not really applicable to earlier phases of the development process.

The role of Metrics in the Validation of Software Engineering

There is another view, that the success of metrics for aiding the construction of proper software lies not in their ability to measure software entities specifically. Instead it is to provide a facility that lets us reason objectively about the process of software development. Metrics provide a unique facility through which we can observe software. This in turn allows us to validate the various processes. Possibly the best method for reducing complexity from the start of a project lies not in measurement of the project itself but in the use of metrics to validate the designs that we wish to employ.

Through the history of metrics development there has been a constant oscillation between the development of understanding of the software environment and its measurement. There are few better examples of this than the measurement of object orientated methods where the research by figures like Chidamber, Kemerer, Basili, Abreu and Briand led not only to the development of new means of measurement but to new understanding of the concepts that drive these systems.

Fred S Roberts said, in a similar vein to the quote that I opened with:

“A major difference between a “well developed” science such as physics and some other less “well developed” sciences such as psychology or sociology is the degree to which they are measured.”

Software metrics provide one of the few tools available that allow the measurement of software. The ability to observe and measure something allows you to reason about it. It allows you to make conjectures that can be proven. In doing so something of substance is added to the field of research and that knowledge in turn can provide the basis for future theories and conjectures. This is the process of scientific development.

So as a final response to the question posed, software metrics have application within development but I feel that their real benefit lies not in the measurement of software but in the validation of engineering concepts. Only by substantiating the theories that we employ within software development can we attain a level of scientific maturity that facilitates true understanding.

References

[1] Stark and Lacovara: On the calculation of relative complexity measurement.

[2] S. R. Chidamber , C. F. Kemerer : A Metrics Suite for Object Oriented Design

[3] The Goal Question Metric Approach: V. Basili, G Caldiera, H Rombach

[4] Fenton NE, Software Metrics, A Rigorous Approach, 1991

[5] Briand, Morasca, Basili: Property-Based software engineering measurement, IEEE Transactions on Software Engineering 1996.

[6] Zuse H: Software Complexity, Measures and Methods 1991

[7] Bache, Neil: Introducing metrics into industry: a perspective on GQM, 1995

[8] Akiyama F: An example of software system debugging 1971

[9] History of Software Measurement by Horst Zuse (<http://irb.cs.tu-berlin.de/~zuse/metrics/History_02.html>)

[10] T McCabe: A Complexity Measure, IEEE Transactions on Software Engineering Dec 1976

[11] M.H. Halstead: On Software Physics and GM’s PL/I Programs, General Motors Publications 1976

[12] Fenton NE, Software Metrics, A Roadmap, 1991

[13] Nagappan, Williams, Vouk, Osborne: Using In Process Testing Metrics to Estimate Software Reliability.

[14] Valerdi, Chen, and Yang: System Level Metrics for Software development

[15] G. Stark, R. L. Lacovara: On the Calculation of Relative Complexity Measurement

[16] Fenton NE, A critique of software defect prediction models 1999

[17] Albrecht: Measuring application development 1979

[18] David Garlan – Why it is hard to build systems out of existing parts.

[19] CMPE 3213 – Advanced Software Engineering (http://www.ee.unb.ca/kengleha/courses/CMPE3213/Complexity.htm)

[20] Ben Goertzel – The Faces of Psychological Complexity

[21] Littlewood B, Brocklehurst S, “New ways to get accurate reliability measures”, IEEE Software, vol. 9(4), pp. 34-42, 1992.

[22] Capability Maturity Model for Software – <http://www.sei.cmu.edu/cmm/>

[23] Halstead, M., Elements of Software Science, North Holland, 1977.

[24] Klemola, Rilling: A Cognitive Complexity Metric Based On Category Learning

[25] Victor R. Basili, Lionel C. Briand, Walcelio L. Melo: A Validation of Object-Oriented Design Metrics as Quality Indicators

[26] Neville I. Churcher, Martin J. Shepperd: Comments on ‘A Metrics Suite for Object Oriented Design’

[27] Graham, I: Making Progress in Metrics

[28] Klemola, Rilling: A Cognitive Complexity Metric Based on Category Learning

[29] Bandi, Vaishnavi, Turk: Predicting Maintenance Performance Using Object-Orientated Design Complexity Metrics.

[30] Rachel Harrison, Steve J. Counsell, Reuben V. Nithi: An Evaluation of the MOOD Set of Object-Oriented Software Metrics

[31] S Counsell, E Mendes, S Swift: Comprehension of Object-Oriented Software Cohesion: The Empirical Quagmire

[32] Abreu: The MOOD Metrics Set.

[33] Rilling, Klemola: Identifying Comprehension Bottlenecks Using Program Slicing and Cognitive Complexity Metrics