‘Distributed Data Storage’

Database Y

Friday, November 22nd, 2013

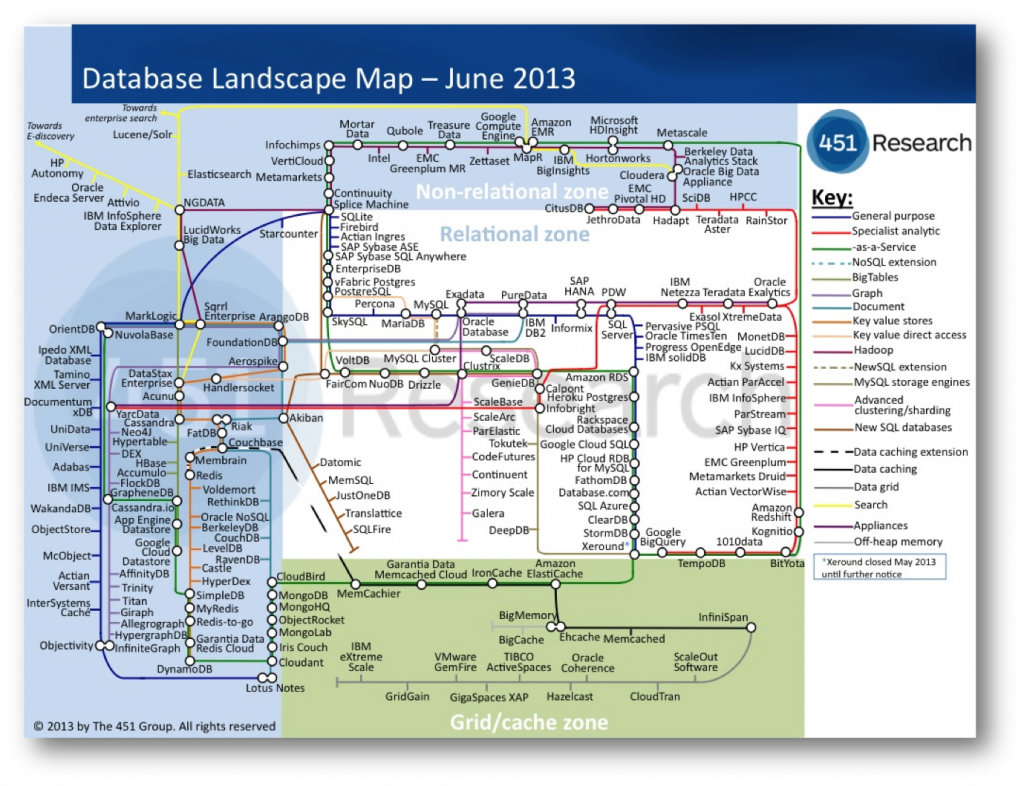

MongoDB recently secured $150m in funding. If you’re not sure how to place that figure, it is more than any database vendor has secured in a single funding round, ever! The company was reportedly valued at $1.2 billion, a huge amount considering the total annual revenue in the NoSQL market was only $542m in 2012. News reports of new databases appear on what feels like a weekly basis. The latest database landscape cites more than two hundred products, and only really scratches the surface.

So this is an interesting time for the database world and there are some inevitable questions arising about where these changes have come from. Certainly it would seem that the database field has not been serving us, the customers, sufficiently well. If it were, these new products would be unlikely to exist. Behind this likely sits a more fundamental change in our needs. The internet has been an obvious contributory factor but there is likely more to this change than simply the need to scale. Joe Hellerstein, something of a guru in the database field, called this out more than a decade ago, and his words make interesting reading today with provocative, if informal, comments like:

“Databases are commoditized and cornered … to slow-moving, evolving, structure-intensive apps that require schema evolution”

The 90s classic The Innovator’s Dilemma also resonates. In it Christensen describes a cycle of replacement, arising from the blinkered following of customers’ contemporaneous needs.

The companies that innovate, in the Christensen sense, tend to operate in smaller, niche markets that are less well served; markets that tolerate the rough edges that new products inevitably have. These innovations often pre-empt changes in the mainstream. If and when shifts occur the mainstream incumbents can be left lacking the expertise they need to compete.

Amongst other examples he cites analysis of hard disk production in the 80s where Seagate came to dominate the hard disk market when it shifted from 8” to the smaller 5.25” format. The manufacturers of the larger 8” format missed a trick. Preoccupied with increasing the performance of the 8” format, preferred by mainframe customers, they could not compete when the market changed. They had listened to their customers, and followed the most profitable of sales, but in doing so they missed what, in hindsight, seems like an obvious shift, but likely seemed less clear cut at the time.

As computers got smaller, Seagate, having cut their teeth in 5.25” technology for the best part of a decade selling to the then niche desktop computer market, cleaned up.

So I find myself wondering if the database world with its flourishing, open source wedded NoSQL movement may be following this pattern?

Whilst it seems unlikely that the latest bedroom-crafted distributed hash table will oust the likes of Microsoft, Oracle and IBM, that does not mean that the pattern will not play out in one form or other. Enterprise customers are not demanding change in the same way the internet companies are, and with enterprise customers making up the lion’s share of the database gravy train the money is not going anywhere fast. The interesting question is whether there is something genuinely disruptive here: something that could leave the incumbent database vendors lacking.

One of the key problems that many of the NoSQL (and NewSQL) vendors face is the absence of the rigorous heritage of the traditional database products. Databases are complicated software, the most complex libraries you will likely program with on a day-to-day basis. Most recent relational database contenders (Aster Data, ParAccel, Greenplum, Netezza, Vertica, to name just a few of many) have been Postgres forks, this being necessary to get a leg up the steep development curve required to build a fully functional DBMS.

NoSQLs such as Couchbase, Cassandra and Dynamo (Voldemort) are all under 200k lines of code, a questionable metric I know, but it hints at the comparative simplicity of some of these solutions.

The NoSQLs are, in many ways, slightly crappy products. A quick scour of the Internet will find you numerous articles citing the crappiness of MongoDB: its strange and weird failure scenarios, overly simplistic delegation of IO to the operating system and (the now changed) default policy to not put any of your data on disk synchronously. The MongoDB is Webscale clip plays on this to hilarious ends.

Yet the market is surprisingly tolerant, at least for the meanwhile. The Christensen explanation would be that niche markets are inherently tolerant of slightly crappy products because there is simply less to compare them to. The first Walkman, personal computer and mobile phone were all actually pretty crappy. They were however also very cool. They did stuff that the mainstream did not, opening markets that were previously unavailable. Few large corporates manage to do this (Jobs’ Apple being the obvious counter).

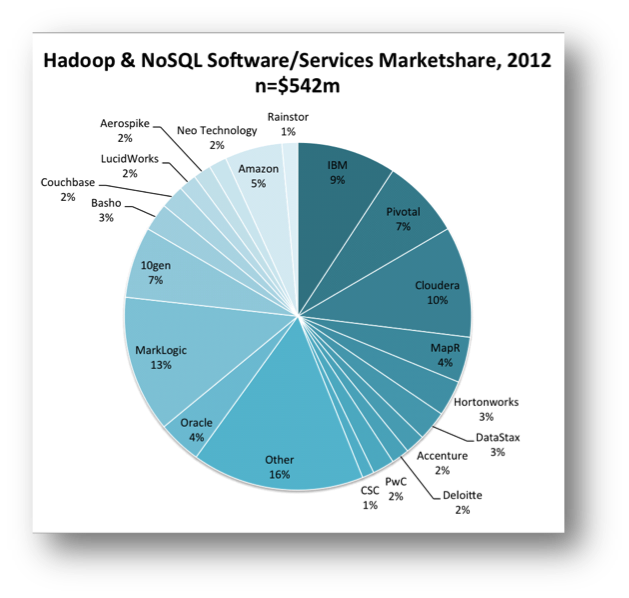

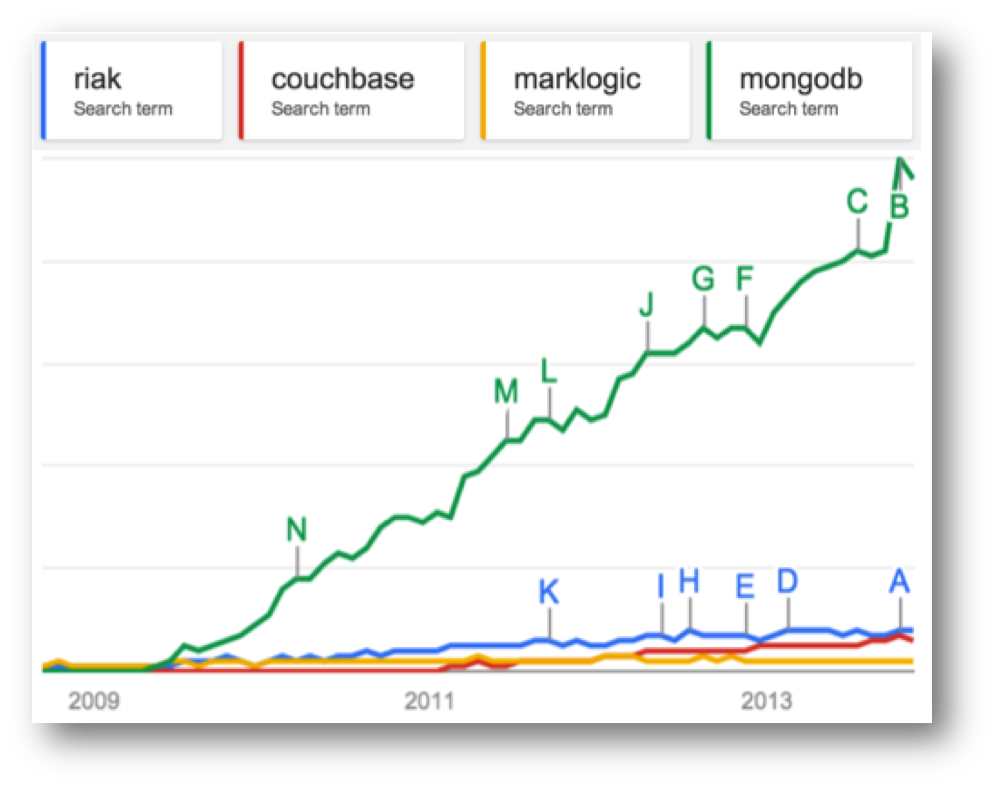

Despite being a little rough round the edges, the use of Mongo has gone viral. The recent valuation of $1.2 billion evidences this success. Marklogic, a far more mature, solid (and expensive) NoSQL with a heritage in XML document storage, makes for an interesting comparison. Marklogic has also had commercial success (Wikibon putting Marklogic as the dominant player in the NoSQL space – see graphic) but has nothing like the community penetration of Mongo. Google Trends puts this well (lower graphic) and VC money in this day and age often follows the horde rather than the balance sheet.

Part of this success can be attributed to the open-source pricing model. Open source makes technology more accessible, coming in under the corporate radar. People are more tolerant of something that is free. Finally and importantly it has a laid back dressing of web-cool that captivates young engineering minds… although that might change if an Oracle-esque corporate sales machine kicks in.

So if NoSQL is simply about scaling then why Mongo’s recent success? Mongo has a fraction of the scalability of Aerospike, Riak or Dynamo. This is because it has chosen the less scalable, but far more user friendly free-form query API which puts it far closer to the traditional OLTP space than other products in the NoSQL space.

Possibly more importantly it panders to the iterative, poly-structured world of today’s engineers and in doing so it is locking into a fundamental truth: the relational world doesn’t sit well with today’s development practices and the latest generation of programmers are less wedded to it than the last.

So are we witnessing an interesting niche field growing up or is this the prologue for a Christensen-style shift of the mainstream?

Oracle took $11 billion of the $34 billion database market in 2012. The whole NoSQL market is a little over half a billion currently (2%), so it seems unlikely that Larry will be quaking in his platinum-coated deck shoes just yet. But to Christensen’s point, if the differentiation is both significant and sustained and the mainstream vendors do not keep up, that could spell change.

It is unclear to me, as it stands today, whether this path is really disruptive. I’ve discussed the signs of convergence before but there are also a number of technical approaches that are acting as differentiators: The use of shards and replicas is in many ways more advanced than that seen in relational systems. The application of schema on need provides an iterative, explorative approach to data-modelling and data-model evolution. More progressively No/NewSQL is moving beyond CAP to include lightweight transactional models that provide stronger consistency guarantees without all the baggage of serialisable two-phase commit.

So whether this is tangential or revolutionary remains to be seen. Intuitively it certainly seems unlikely that David will topple any of the Goliaths; in fact it seems more likely that the Goliaths will simply eat (buy) the Davids, but we should still see deeper changes than those evidenced by the current lip service paid by the big vendors. This is a good thing.

Big Data’s ill-defined promise of otherwise missed insight has done more for bolstering vendor sales figures than it has to derive value in many of the organisations that implement it.

That’s not to say that it is without successes, far from it. Empirical exploration of customer behaviours has changed the way we interact with customers and has in fact raised the bar for all online custom. But approaches like bolting an MR framework onto an existing storage engine or adding a JSON column type smack more of feature-sheet bolstering than they do of true technical innovation.

Instead the big vendors should look to the world of today’s engineers: cheap commodity hardware, agile development, continuous integration / delivery, poly-structured data both on the wire and in programs and a general lack of tolerance for anything that removes control from the development process. Mongo’s success can certainly be attributed, at least in part, to this.

The relational world is however both deeply embedded and wholly trusted, and so it should be. Data is something that lasts for decades and holding long-lived data in a schemaless store will bring with it many additional challenges not seen today. This fact alone may see truly schemaless stores marginalised in the long run.

But the generational thing may be decisive. If decision makers in large companies have one thing in common it is their age. Having sat through decades of relational dominance we can cite a thousand sensible, logical, often unquestionable reasons why this dominance should continue. Generation Y however are simply less likely to see it the same way.

The Big Data Conundrum

Saturday, November 10th, 2012

I attended an interesting talk at JAX earlier this year by a guy called Ian Plosker, from Riak, somewhat amusingly entitled ‘TheBigDataCon’ (worth a look by the way – the slides are good). Ian makes a little fun of all the current hype, joking that vendors seemed to be the only people actually monetising Big Data. I think we can’t help but be a little cynical of anything that has this much hype.

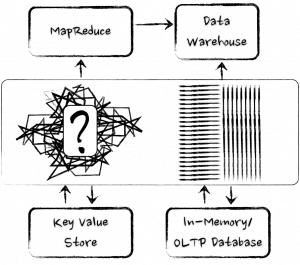

On another level the term has become overloaded. It has many definitions; Oracle, for example, talks about Big Data in a very different way to, say, MapR. It seems to broadly boil down to two angles though:

- The promise of greater insight using the huge amounts of data we produce

- A change in the technologies we use to crunch our way through the data we have (or expect to have)

Like any other commodity, the harder it is to extract, the more it costs. The aspirational, needle-in-a-haystack concept that drives much of the marketing paraphernalia is certainly real and should not be ignored. However the hype around the ‘hidden insight’ thing masks a more fundamental, and grounded point: the technology shift that facilitates all this.

There is a view that today’s data is ‘big’ and that having big data means some form of MapReduce. Yet it is not size that really matters. Both relational and NoSQL camps can deal with the data volumes (and even, for the most part, the three Vs in one way or another). eBay for example runs a 20PB+ database. Yahoo and Google both have larger MR clusters, but not that much larger. For most problems data volume alone is not enough to make a sensible technology choice (and I’d contest that any of the Vs were really enough either). As the academic world likes to keep reminding us (here and here) performance is not the reason to pick up a big data technology. The reason is that these new technologies embrace a very different approach to data analysis, particularly in the context of the whole ‘lifecycle’ of our data analysis work. Big Data technologies decouple us from some of the shackles that make big data problems hard. However, there is no free lunch and they come with some shackles of their own.

A core difference is the ability to define a schema at runtime, rather than upfront. That alone is a powerful, and game changing idea. Dave Campbell put it well in his VLDB keynote when he said ‘ability to model data is much more of a gating factor than raw size, particularly when considering new forms of data’. Modelling data, getting it into a form we can interpret and understand, can be a longwinded and painful task, and something we must do before we can do anything useful with it.

Our ability to model data is much more of a gating factor than raw size

Traditional databases push us to model our data before we store it. Big data solutions often leave their data in its natural form. A ‘virtual schema’ is bound at runtime. This concept of binding the schema ‘late’ is powerful. It allows the interpretation of the data to be changed at any time without having to change the physical format of the data on disk. Something that becomes increasingly important as the size of the dataset increases. The downside of not imposing a schema from the point of ingestion is that keeping old forms of data ‘current’ becomes an increasingly difficult task. That’s to say that the client is left with the problem of handling many data representations. Fine if the model is free text, tougher if the model has any real structure (explicit or implicit).
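To make the late-binding idea a little more concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the sample records, the field names and the two `bind_schema_*` functions are hypothetical and not taken from any particular product. The raw lines stay exactly as they were ingested and the ‘virtual schema’ is just a function applied at read time.

```python
import json

# Raw records kept in their "natural" form: one JSON document per line.
# The two records deliberately differ in shape (hypothetical sample data).
RAW_LINES = [
    '{"user": "alice", "amount": "12.50", "ts": "2012-11-01"}',
    '{"customer": {"name": "bob"}, "amount": 7, "ts": "2012-11-02"}',
]


def bind_schema_v1(record):
    """First interpretation: only knows about the flat 'user' field."""
    return {"name": record.get("user"), "amount": float(record["amount"])}


def bind_schema_v2(record):
    """Later interpretation: also understands the nested 'customer' form."""
    name = record.get("user") or record.get("customer", {}).get("name")
    return {"name": name, "amount": float(record["amount"])}


def read(lines, schema):
    """Apply a schema at read time; the stored lines are never rewritten."""
    return [schema(json.loads(line)) for line in lines]


v1_rows = read(RAW_LINES, bind_schema_v1)
v2_rows = read(RAW_LINES, bind_schema_v2)
```

Swapping `bind_schema_v1` for `bind_schema_v2` changes the interpretation without touching the stored data, which is the upside. The downside is visible too: it is the reading code, not the store, that has to know about every shape the data has ever taken.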

The concept of binding the schema ‘late’, with the data held in its natural form, is powerful.

Big Data technologies offer very different performance profiles to relational analytics tools. The lack of indexing and overarching structure means inserts are fast, making them suitable for high velocity systems and batch processing. The imperative interface and the absence of a schema make diverse, ad hoc analytics hard though. Instead they work best for specific, well-defined data operations (I often use the data enabled grid analogy). It is likely this that has driven Big Data leaders, Google, and more recently Hadoop, to add more database-like features to their products (Dremel/Megastore/Spanner providing SQL-like interfaces and ACID semantics). Yet it’s much harder to optimise in a late-bound world; no big data solution today comes close to the raw performance of the top end analytics engines.

Most of today’s databases are hindered hugely by needing the schema to be defined upfront.

The last thing to consider is the cost of change. For simple data sets it is less apparent. Start joining data sets together though and it becomes a different ball game. Whilst possible with Big Data technologies, it’s just going to cost more, and managing the complexity in the absence of a schema becomes an increasingly uphill struggle. In this case, better to stick in the relational world (for now at least).

However most of today’s databases have the HUGE disadvantage that the schema needs to be defined, and the data understood, upfront. Great for simple, well defined business data, but if you’re searching free text, machine generated data or simply a hugely diverse data population (like the data that gets thrown around most big organisations) it’s simply not practical or maybe not possible to understand, and model, the data upfront. By applying the schema later in the cycle the cost of change, the availability of insight and the inherent feedback cycles can all be improved.

As for the future?

You’ll probably have noticed that every database vendor worth their salt now has some form of Big Data offering, be it bought in, ‘tacked on’ or genuinely integrated. Likewise the Big Data vendors are looking more and more like their relational counterparts, sprouting query languages, loose schemas, columnar storage, indexing, even elements of transactionality. The two camps are converging.

Many of the new set of relational technologies look more like MapReduce than they do like System R (IBM’s original relational database). Yet the majority of the database community still seem to be lurking in the corner of the playground, wearing anoraks and murmuring (although these days the anoraks are made by Armani). They are a long way from penetrating the progressive Internet space. Joe Hellerstein’s words still ring true today.

The new cool kids of the database world are making their mark with technologies of the moment, backed with a hefty dose of academic acumen.

The future is likely to be one of convergence, and redirecting the database community is undoubtedly good. In fact possibly the most useful thing the NoSQL movement has done has been to give a well timed boot to the database world’s behind, reminding them that they need to listen to their consumers. They got stuck in a rut and the internet space wasn’t going to wait around. Convergence over some newly shared values that sit between the two camps is of course inevitable, and welcomed.

The database world got stuck in its ways and the internet space wasn’t going to wait around.

The evidence is quite plain already. There are a host of young (ish) upstart technologies hitting the space. The number of shared nothing analytics engines has significantly increased (Asterdata, Vertica, VoltDB, Exasol, ParAccel, Greenplum, Hana; the list goes on) and their benchmarks are extremely impressive. There are hybrid engines mixing MapReduce engines with smarter storage, routing and indexing strategies. Hadapt and Impala are good examples, the former particularly as it is the one that probably best personifies the blending of the two worlds.

These new upstart database technologies redefine the current mainstream with, not in spite of, the lessons of the past.

Finally there are some interesting one-stop-shop approaches. Holistic solutions that span dynamic schema provisioning and data access, all the way to presentation in a single package. Originating in the machine generated data space, Splunk (dominant) and Logscape (scalable), are the current leaders in this space and there is likely to be a lot more activity. For answering the what-if questions or assembling high level MI stacks these all inclusive solutions get the closest to answering the more insightful questions we have today.

Whether this ever breaks the stranglehold of the oligopoly of key database players remains to be seen. Michael Stonebraker still rains disdain on the NoSQL world even today [see here]. He may be outspoken, he may come across like a bit of a **** at times, but it is unlikely that he is wrong. The solutions of the future will not be the pure (and relatively simplistic) MapReduce of today. They will be blends that protect our data, even at scale. For me the new technologies coming from both camps are exciting as they redefine the current mainstream thinking with, not in spite of, the lessons of the past.

The Rebirth of the In-Memory Database

Sunday, August 14th, 2011

Trends in software tend to go in cycles. Ideas are reinvented with the wisdom of the past, reappearing youthful and rejuvenated in the context of a new era. Yet behind these evolving rhythms often lie the same fundamentals that have echoed through the software world since its formative years more than a quarter of a century ago. The fundamentals of software rarely change. When reinvention does arrive it comes from the context of the new era: the capabilities of our hardware and the types of problems we wish to solve. These are the variables that drive evolution.

After more than a quarter of a century of domination, the Internet era is changing our requirements and driving the reinvention of the traditional database. None of the fundamentals have changed of course; we just have more data, more users and, currently, a larger number of simpler, OLTP use-cases. As a result we’re more likely to forgo some degree of consistency to get what we want. Distribution is at the core of the technologies of the moment, with solutions architecting their way around the limitations of our hardware stack. But, almost in spite of this, hardware is changing and in some very significant ways. Terabyte memory architectures, solid-state drives and Phase-Change Memory are remoulding the hardware-landscape into one where address-spaces are both vast and durable.

So my conjecture is this: whilst the disruption of late may have been led by the ‘big-data’ driven, Internet behemoths, the next set of disruptive technologies may well come from the OLAP space. Enterprise users’ need for fast analytical processing will drive the reinvention of in-memory databases: technologies that store data entirely within the address space, leveraging new physical storage mechanisms to provide far faster results to business queries whilst maintaining the degree of durability that we expect from traditional databases.

The argument for using in-memory solutions is simple: If data storage requirements can be constrained to a single address-space the complexity of the problem domain is dramatically reduced. The knowledge of any piece of data is microseconds, or even nanoseconds, away. There is no need to page information into and out of memory; it is all there at your fingertips, ready to be processed. Probably most significant is the fact that the data structures used do not need to be optimised for disk, disks being particularly tricky to design for due to the huge discrepancy between their random and sequential performance.

Yet despite these advantages in-memory databases have had relatively limited market penetration. Oracle’s TimesTen is a good example, infiltrating only a limited number of specialist markets. This is likely due to the two fundamental issues with single machine, in-memory solutions. The first is the lack of durability: what happens when you pull the plug? The second is the ‘one more bit’ problem: what happens when your database becomes one bit larger than the memory of the machine on which it is running?

The last few years have seen the introduction of a group of distributed, in-memory products that improve on the standard in-memory database through the use of a Shared-Nothing architecture [13, 14]. Being distributed solves both the aforementioned problems: the ‘one more bit’ problem is solved by simply adding more machines, more partitions (shards) and implicitly more bits. Durability is also less of a concern as redundant copies of the data can be spread around the cluster making it far less sensitive to single machine failure. Data-caching products like Oracle Coherence have been doing this for some years. More recently we’re seeing fully blown ACID compliant software like the Stonebraker-inspired VoltDB [3]: an in-memory, distributed database with both scalability and fault tolerance. SAP is also making significant inroads with Hana, their distributed in-memory database [5,6] (with one of the SAP founders, Hasso Plattner, explaining their vision in some detail in his book [7]). Finally Exasol has recently taken pole position in the TPC-H benchmarks with its lightning fast distributed in-memory database [16,17].
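As a rough illustration of how partitioning and redundant copies address the two problems, here is a toy Python sketch. It is not how Coherence, VoltDB or Hana actually place data; the `ShardedStore` class, its modulo placement and the ‘next node along’ replica rule are assumptions chosen purely to keep the example short.

```python
import hashlib


class ShardedStore:
    """Toy in-memory store: keys are hash-partitioned across nodes and each
    entry is copied to `replicas` consecutive nodes."""

    def __init__(self, node_count, replicas=2):
        if replicas > node_count:
            raise ValueError("cannot hold more replicas than nodes")
        self.nodes = [dict() for _ in range(node_count)]
        self.replicas = replicas

    def _owners(self, key):
        # Stable hash so the same key always maps to the same primary node.
        digest = hashlib.sha256(key.encode("utf-8")).hexdigest()
        primary = int(digest, 16) % len(self.nodes)
        return [(primary + i) % len(self.nodes) for i in range(self.replicas)]

    def put(self, key, value):
        # Write the value to the primary and to each backup node.
        for node_id in self._owners(key):
            self.nodes[node_id][key] = value

    def get(self, key, failed=()):
        # Read from the first owner that has not failed.
        for node_id in self._owners(key):
            if node_id not in failed:
                return self.nodes[node_id][key]
        raise KeyError(f"all replicas of {key!r} are unavailable")


store = ShardedStore(node_count=4, replicas=2)
store.put("trade:42", {"qty": 100})
primary = store._owners("trade:42")[0]
survived = store.get("trade:42", failed={primary})
```

Adding capacity means adding entries to `nodes` (real systems also have to rebalance existing keys, which this sketch ignores), and the read still succeeds when the primary is marked as failed because a second copy lives elsewhere.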

However the move to distribution comes with drawbacks: Like all Shared-Nothing solutions (including all the NoSQL ones) complex queries will always crosscut the partitioning strategy, implying some form of distributed join. Cross-machine joins imply the shipping of data/keys across the network to facilitate the join’s computation. This is the Achilles heel of the Shared Nothing architecture, although to be honest there are others. If complex query-patterns with distributed joins are necessary, we’re thrown back down the road along which we came: as with the case of the traditional database, we again need to mediate between different storage media, only this time the traditional disk is replaced by data in a different partition, on a different machine. This is alas somewhat akin to having remote data access again!
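The effect is easy to see in a small simulation. The sketch below is illustrative only: the two tables, the two-node cluster and the modulo `node_for` placement are all hypothetical. Orders are partitioned by order id and customers by customer id, so a join between them cuts across the partitioning and some lookups have to leave the node.

```python
from collections import defaultdict

NODES = 2


def node_for(key):
    # Hypothetical placement rule: integer keys, modulo the node count.
    return key % NODES


customers = {1: "alice", 2: "bob", 3: "carol"}
orders = [  # (order_id, customer_id, amount)
    (10, 1, 5.0),
    (11, 2, 7.5),
    (12, 3, 1.0),
    (13, 1, 2.5),
]

# Customers are partitioned by customer_id ...
customer_parts = defaultdict(dict)
for cid, name in customers.items():
    customer_parts[node_for(cid)][cid] = name

# ... whereas orders are partitioned by order_id.
order_parts = defaultdict(list)
for order in orders:
    order_parts[node_for(order[0])].append(order)


def distributed_join():
    """Join orders to customers, counting lookups that cross nodes."""
    rows, remote_lookups = [], 0
    for node, local_orders in order_parts.items():
        for order_id, cid, amount in local_orders:
            owner = node_for(cid)
            if owner != node:
                remote_lookups += 1  # this key would travel over the network
            rows.append((order_id, customer_parts[owner][cid], amount))
    return sorted(rows), remote_lookups


rows, remote_lookups = distributed_join()
```

Each increment of `remote_lookups` stands in for a wire call, so the cost of the join depends on how well the two partitioning schemes happen to line up rather than on the size of memory on any one machine.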

The point is that by distributing an in-memory database over a set of machines some important problems are solved, but more are created. The simplest solution is to avoid the kind of queries that need cross-partition joins. This is the solution propagated by the NoSQL movement. Another method is to use a technique like the Connected Replication Pattern [9] to avoid key shipping. However ultimately there may be no need to do either.

Whilst increases in clock speed may have all but petered out, transistor density continues to increase exponentially in accordance with Moore’s Law. Processor power, memory and network speeds all show significant gains [1]. By comparison the data storage requirements for most enterprise databases are relatively small. 82% of databases were under 1TB in one relatively recent study [8] and increase relatively slowly at around 10% per annum [2], significantly less than the rate of hardware progression. At the time of writing £20,000 will buy you a 40-core machine with 512GB of RAM and a 10GE network interface. The next few years should see machines with upward of a hundred cores, terabytes of RAM and 100GE connectivity in the ‘commodity space’. The implication is a world where the increasing capability of individual hardware units could overtake our need for physical resources, at least in OLAP and enterprise markets where databases are rarely more than a few terabytes.
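A back-of-the-envelope version of that argument can be written down in a few lines of Python. The 1TB database, the 10% annual growth and the 512GB machine come from the figures above; the two-year doubling period for commodity RAM is purely my assumption for the sake of the sum, so treat the result as illustrative rather than a forecast.

```python
def crossover_year(db_tb, db_growth, ram_tb, ram_doubling_years, max_years=50):
    """Return the first whole year in which RAM capacity >= database size,
    or None if that does not happen within max_years."""
    for year in range(max_years + 1):
        db_size = db_tb * (1 + db_growth) ** year
        ram_size = ram_tb * 2 ** (year / ram_doubling_years)
        if ram_size >= db_size:
            return year
    return None


# 1TB database growing 10% a year vs. a 512GB (0.5TB) machine.
# ram_doubling_years=2.0 is an assumed hardware trend, not a sourced figure.
year = crossover_year(db_tb=1.0, db_growth=0.10, ram_tb=0.5, ram_doubling_years=2.0)
```

Under those assumptions the machine catches the database in the third year. Lengthen the doubling period and the crossover moves out, which is really the point: the conclusion rests on hardware growing materially faster than the data.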

However Moore’s Law is not the only catalyst of change. Solid-state media is encroaching on the performance of RAM. Fusion IO [10] – a performance leading SSD technology that uses a PCI interface – supports read latency in the tens of microseconds and around 5Gb/s of throughput (although this is limited to about 1Gb/s from a single thread [11]). That’s still a couple of orders of magnitude slower than RAM but an order of magnitude faster than disk for sequential read and significantly more than that for random access [15]. Phase Change Memory [12], with an anticipated arrival date in 2015, is predicted to scrape another order of magnitude from this difference.

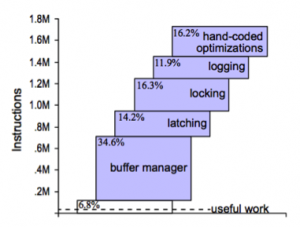

The problem is that current database technologies can’t take advantage of these fast media. A recent study by HP shows that, whilst FusionIO will provide up to three orders of magnitude better performance compared to disk for random read operations, performance on the standard TPC-H benchmark showed no visible improvement [15] (although other studies have shown marginal improvements [18]).

So what does all this mean? Firstly, it seems plausible that, ultimately, in-memory databases will replace disk-resident ones as the de facto standard. The advantages of knowing that all data is in memory are hard to overstate. The need for intermediate results, and the temporary spaces to compose them, is hugely reduced as there is simply no need to mediate data between RAM and disk (or other media). Distribution will of course remain for large storage requirements, particularly in the short term, but the performance of a single address space will likely prove compelling to many enterprise users in the coming years. This has always been the sales pitch for Oracle’s TimesTen, the key difference being that it is more generally used as a bolt-on to an existing Oracle implementation. The next generation of solutions should be in-memory and stand-alone.

If this new class of solution does arrive it should also differentiate itself from its in-memory predecessors by the way it utilizes recent developments in fast-connected media such as FusionIO and Phase Change Memory (PCM), applying them to solve those two primary issues: ‘durability’ and the ‘one more bit’ problem. This is more than simply taking existing in-memory databases and adding flash-cards. Secondary storage may still be one or two orders of magnitude slower than RAM, but the traditional approach of paging data to and from disk via some in-memory user-space is far too inefficient and needs to be addressed. By re-architecting to take into consideration the different physical properties of solid-state media, in particular the hugely better performance for random access, we should see a different class of solution that is far more performant. This middle ground lies where data is primarily in memory and engineered to be durable through write-through and overflow into solid-state media. As technologies like PCM reduce the performance discrepancies between RAM and persistent storage this middle-ground approach will likely become more and more fruitful, maybe even bringing with it a new era of database architecture.
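For readers who have not met the term, write-through in its very simplest form looks something like the Python sketch below. This is the naive version, an ordinary dictionary backed by an append-only log on whatever persistent medium sits underneath, and it makes no attempt at the re-architecting for solid-state media argued for above. The `WriteThroughStore` class and its JSON-lines log format are assumptions made for the example.

```python
import json
import os
import tempfile


class WriteThroughStore:
    """In-memory dict whose every write is first appended to a durable log."""

    def __init__(self, log_path):
        self.log_path = log_path
        self.data = {}
        # Recover state by replaying the log, if one exists.
        if os.path.exists(log_path):
            with open(log_path, "r", encoding="utf-8") as log:
                for line in log:
                    key, value = json.loads(line)
                    self.data[key] = value
        self._log = open(log_path, "a", encoding="utf-8")

    def put(self, key, value):
        # Persist first, then update memory, so an acknowledged write
        # can be replayed after a restart.
        self._log.write(json.dumps([key, value]) + "\n")
        self._log.flush()
        os.fsync(self._log.fileno())
        self.data[key] = value

    def get(self, key):
        # Reads are served purely from memory.
        return self.data[key]

    def close(self):
        self._log.close()


with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "store.log")
    store = WriteThroughStore(path)
    store.put("a", 1)
    store.put("a", 2)
    store.close()  # stands in for "pulling the plug"
    recovered = WriteThroughStore(path)
    value_after_restart = recovered.get("a")
    recovered.close()
```

Every `put` pays for a synchronous write, which is exactly the cost that faster persistent media should shrink; reads never touch the log at all.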

Of course this is largely conjecture, but looking to the future it seems inevitable that the spinning magnetic disks we use today will seem as arcane to the engineer of the future as saving data to cassette seems today. Solid-state storage must ultimately prevail.

In-memory databases are simply much faster. Hardware has progressed to the point that the typical enterprise database will fit in the memory of a well specified, commodity machine. With solid-state storage mitigating some of the previously prohibitive risks, in-memory (or at least single address-space) databases should become an increasingly compelling option for enterprise users. The ease of selling a two order of magnitude performance improvement to an enterprise boardroom is self-evident and it is this that should drive the reinvention of this technology.

References:

[1] http://blogs.cisco.com/datacenter/networking_delivering_more_by_exceeding_the_law_of_moore/

[2] http://repo.solutionbeacon.net/DB-Growth-Problems-and-Solutions-v01-revised.pdf

[3] http://voltdb.com/

[4] http://bytesizebio.net/index.php/2011/07/02/cafa-update/

[5] http://www.enterpriseirregulars.com/39209/the-real-potential-impact-of-sap-hana/

[6] http://www.nbr.co.nz/article/memory-databases-next-big-thing-ck-96642

[7] http://www.sap.com/platform/pdf/In-Memory%20Data%20Management.pdf

[8] http://www.b-eye-network.com/blogs/madsen/archives/2009/04/size_of_data_wa.php

[9] http://www.benstopford.com/2011/01/27/beyond-the-data-grid-building-a-normalised-data-store-using-coherence/

[10] http://www.fusionio.com/

[11] http://www.pdsi-scidac.org/events/PDSW10/resources/papers/master.pdf

[12] http://www.theregister.co.uk/2011/06/30/ibm_research_phase_change_memory/

[13] http://en.wikipedia.org/wiki/Shared_nothing_architecture

[14] http://www.benstopford.com/2009/12/06/are-databases-a-thing-of-the-past/

[15] http://h20195.www2.hp.com/v2/GetPDF.aspx/4AA0-0248ENW.pdf

[16] http://www.exasol.com/en/home.html

[17] http://www.tpc.org/tpch/results/tpch_perf_results.asp

[18] http://www.mysqlperformanceblog.com/2009/05/01/raid-vs-ssd-vs-fusionio/

See Also:

A Case for Flash Memory SSD in Enterprise Database Applications: http://www.cs.arizona.edu/~bkmoon/papers/sigmod08ssd.pdf

Non-linear growth in biological databases: http://bytesizebio.net/index.php/2011/07/02/cafa-update/

Is the Traditional Database a Thing of the Past?

Sunday, December 6th, 2009

The Internet has brought with it a new type of data source: large distributed repositories that cope with the extreme scale necessitated by millions of users. Traditional concepts of Consistency, Normalisation, Transactionality and Referential Integrity are increasingly neglected as engineers relax their application constraints to leverage the eventual consistency of distributed data stores.

But what does this mean for the traditional enterprise application?

Whilst most enterprises do not need to vend data on the scale of Google, Twitter or Amazon they are none the less becoming more data hungry. Increasingly traditional databases cannot provide the bandwidth, latency or processing power they need.

Most current database products can trace their lineage back to IBM’s System R [18], developed back in the 1970s. Both software and hardware practices have evolved significantly since then, but the architecture of core database systems has seen comparatively little change [12]. There is good reason for this; database technology is mature, reliable and well understood. Only recently has its dominance started to falter in application spaces requiring extreme scale (often characterised by the physical constraints of a single machine and network connection becoming prohibitive). This has led to the emergence of a number of diverging technologies in the enterprise application space. Some have evolved from the application framework arena, some from super-computing, others from the database world itself. This article focuses on some of the most influential: Clustering, Shared Nothing Architectures, Column Orientation and Distributed Caching. These technologies have changed the data storage landscape: It has now become necessary to understand and select the type of database you need. No one product can do it all.

Clustering: The Distributed Data Store

Moore’s Law [14] has affected not only processor speed but also disk size, disk speed and memory capacity. Whilst this should have lessened the need for distributed applications, bus and interconnect speeds have increased by a comparatively small amount [16]. So although the processing power of a single machine has increased dramatically, our ability to present data to these processors has not kept pace. Single box architectures therefore become bandwidth limited, and increasingly engineers look to distributed solutions so that overall bandwidth is summed across a cluster of machines.

Clustering is crucial to modern systems as it provides a route out of the scale-up [17] world whilst also allowing high availability to be achieved through real time data redundancy. In general terms it is the mechanism for joining a collection of computers together so they approximate a single entity. The challenges are many and go beyond the scope of this article (if you are interested they include consensus problems [5], ordering problems [6] and concurrency [7]). Clustering, in some form, is fundamental to any scale-out system that requires shared state, where load balanced architectures are insufficient.

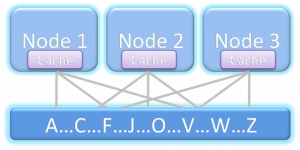

The downside of clustering is that it pushes the fundamental problem of the hardware architecture, access to shared memory, into the software domain. Not only must software handle the federation of hardware, but these disparate machines are connected via significantly slower interconnects than their scale-up counterparts (100μs being typical for a wire call vs 100ns for local memory access). This represents the fundamental problem of distributed systems. Clustered data stores represent probably the greatest challenge of all as they are little more than shared state (for example the clustered shared disk architecture shown in Figure 1).

Figure 1. A shared disk architecture. All nodes have access to all data.
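To put the latency gap in context, the short sketch below simply does the arithmetic on the two figures quoted above. The function names are illustrative, and the model assumes every access is serialised and pays the full latency, which real systems soften with batching and pipelining.

```python
# Order-of-magnitude figures quoted in the text.
LOCAL_MEMORY_ACCESS_S = 100e-9   # 100 ns for a local memory access
WIRE_CALL_S = 100e-6             # 100 microseconds for a wire call


def total_latency(accesses: int, per_access_s: float) -> float:
    """Seconds spent if each access is serialised and pays the full latency."""
    return accesses * per_access_s


def slowdown() -> float:
    """How many times slower a wire call is than a local memory access."""
    return WIRE_CALL_S / LOCAL_MEMORY_ACCESS_S


if __name__ == "__main__":
    print("slowdown factor:", round(slowdown()))
    print("1M local accesses (s):", total_latency(1_000_000, LOCAL_MEMORY_ACCESS_S))
    print("1M wire calls (s):", total_latency(1_000_000, WIRE_CALL_S))
```

Under these assumptions a million dependent accesses take roughly a tenth of a second locally and roughly a hundred seconds over the wire, which is why the cost of sharing state has to be designed for rather than ignored.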

There is unfortunately no general solution for efficiently sharing state across a distributed architecture. The engineer must factor the cost of sharing state into the design of the system rather than treating it as a black box with fixed performance. This makes the transition from single machine to clustered data store difficult. Many products attempt general solutions to this problem, and with some success. For example, Oracle Exadata [8] comes close to replicating a single large machine in a clustered environment through some clever use of an ultra-fast InfiniBand [25] network and pre-filtering technology at the disk head. Whilst these technologies reduce the cost of a wire call, that cost still remains orders of magnitude larger than accessing local memory. Ultimately these costs impede scalability unless significant care is taken in the design process.

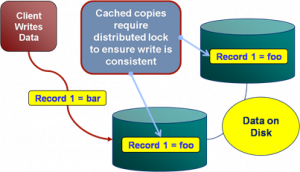

To better understand the challenges of shared memory in a clustered database, consider the simple case of writes. As writes can be routed to any machine in the cluster, a machine must obtain the appropriate lock, usually from another machine (see Figure 2). Protocols that require lock management over the network tend to scale as O(n), although this depends on the architecture used by the DBMS. This is discussed further in [15].

Figure 2. The distributed locking problem inherent in distributed data stores that replicate data.
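One way to see why locks are "usually" obtained from another machine is to count the cases. The sketch below is a toy model, not a description of any particular DBMS: it assumes each lock is owned by exactly one node, that ownership is spread evenly, and that a write is equally likely to arrive at any node. The function name is hypothetical.

```python
from fractions import Fraction


def remote_lock_fraction(node_count: int) -> Fraction:
    """Fraction of writes that must fetch their lock over the network.

    Enumerates every (receiving node, lock owner) pair, treating each
    pair as equally likely, and counts those where the two differ.
    """
    remote = 0
    total = 0
    for receiving_node in range(node_count):
        for lock_owner in range(node_count):
            total += 1
            if receiving_node != lock_owner:
                remote += 1
    return Fraction(remote, total)


if __name__ == "__main__":
    for nodes in (2, 4, 16):
        print(nodes, "nodes ->", remote_lock_fraction(nodes))
```

In this model the fraction is (n - 1)/n, so as the cluster grows almost every write pays at least one network round trip before it can proceed.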

Shared Nothing Architectures

One alternative is to change the architecture to remove the need for block shipping or distributed locking. This can be achieved by partitioning data over a grid, a method first suggested by DeWitt et al in the Gamma Database [21] and popularised by the term Sharding [9] in the database community. This model is extended by partitioning both data and the responsibility for processing it to produce what is known as a Shared Nothing Architecture [19]. These limit the need for distributed locking by federating the architecture into discrete, encapsulated units that work autonomously. It is this focus on self-sufficient leaf nodes that drives the scalability.

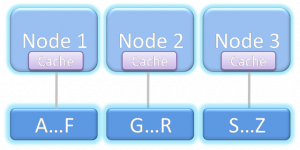

Because a Shared Nothing Architecture involves a physical partitioning of resources, processing, memory and disk become dedicated to a certain sub-section of the data set (the local partition). Thus each process has dedicated resources and is autonomous with respect to its data subset (see Figure 3). It is this autonomous partitioning that allows such stores to scale linearly as hardware is added. This autonomy reduces the need for coordination between machines (particularly with respect to locks) when compared to the shared disk architecture shown in Figure 1.

Figure 3. A Shared Nothing Architecture. Nodes only have access to data associated with that node.
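The partitioning idea can be sketched in a few lines. This is a minimal, single-process illustration that assumes hash partitioning on the key; `partition_for` and `ShardedStore` are hypothetical names, and each in-memory dictionary stands in for a separate node with its own memory and disk.

```python
import hashlib


def partition_for(key: str, partition_count: int) -> int:
    """Map a key to a partition using a stable hash of the key."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % partition_count


class ShardedStore:
    """Each partition owns a disjoint subset of the keys."""

    def __init__(self, partition_count: int):
        self.partitions = [dict() for _ in range(partition_count)]

    def _owner(self, key: str) -> dict:
        return self.partitions[partition_for(key, len(self.partitions))]

    def put(self, key: str, value) -> None:
        # Only the owning partition is touched; no other node is consulted.
        self._owner(key)[key] = value

    def get(self, key: str):
        return self._owner(key).get(key)


if __name__ == "__main__":
    store = ShardedStore(partition_count=4)
    store.put("alice", {"balance": 10})
    print(store.get("alice"))
```

Because every key has exactly one owner, a read or write for that key never needs a lock held elsewhere, which is the property the architecture relies on.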

Shared Nothing Architectures however come at a price. The partitioning model breaks down when queries require intermediary results to be shipped between machines, particularly where those intermediary results will not form part of the final result. Examples include joins between ‘Fact’ [10] tables (where the join keys must be moved from one machine to another), multidimensional aggregations such as multi-dimensional risk calculations (i.e. the OLAP domain [22]), or transactional writes that span the current partitioning strategy.

Fortunately, many modern Use Cases have little requirement for complex joins that span large data sets because the bulk of queries have a common attribute that can be used to ensure they all hit the same shard of the database (and hence the query can be handled by a single node). For example, access to data in an online banking application will naturally group by the user’s identifier. So long as partitioning uses the user’s identifier, queries will scale well. The counter examples require complex joins that bring together large data sets that cannot be collocated across the distributed environment. Extending our banking example, listing other users’ accounts that can be paid into would mean joining across the partitioning key. This requires either key shipping or a two part query (get the user’s details then go back for the payable accounts).
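The banking example can be sketched as follows. The data model here (integer user ids, modulo partitioning, a `payee_ids` list stored with each user) is invented purely for illustration; the point is the difference between a query that stays on one shard and a two part query that crosses the partitioning key.

```python
PARTITION_COUNT = 4

# One dictionary per shard, keyed by user id (stands in for separate nodes).
shards = [dict() for _ in range(PARTITION_COUNT)]


def shard_index(user_id: int) -> int:
    return user_id % PARTITION_COUNT


def save_user(user_id: int, accounts: list, payee_ids: list) -> None:
    shards[shard_index(user_id)][user_id] = {
        "accounts": accounts,
        "payee_ids": payee_ids,
    }


def own_accounts(user_id: int) -> list:
    """Single shard query: everything needed lives with the user."""
    return shards[shard_index(user_id)][user_id]["accounts"]


def payable_accounts(user_id: int):
    """Two part query: read the payee ids, then visit each payee's shard."""
    visited = {shard_index(user_id)}
    payee_ids = shards[shard_index(user_id)][user_id]["payee_ids"]  # part one
    accounts = {}
    for payee_id in payee_ids:                                      # part two
        visited.add(shard_index(payee_id))
        accounts[payee_id] = shards[shard_index(payee_id)][payee_id]["accounts"]
    return accounts, visited


if __name__ == "__main__":
    save_user(1, ["1-current"], payee_ids=[2, 7])
    save_user(2, ["2-savings"], payee_ids=[])
    save_user(7, ["7-current"], payee_ids=[])
    print(own_accounts(1))
    print(payable_accounts(1))
```

The second query touches as many shards as there are distinct payee locations, so its cost grows with the spread of the data rather than staying constant.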

Such Use Cases can generally be worked around simply (usually by doing two or multistage queries) and Shared Nothing systems leverage this fact. The workarounds do however require effort from the application developer, and as such should be the exception rather than the norm. If your Use Case includes crosscutting or ad hoc joins that do not lend themselves to a clean partitioning strategy then Shared Nothing solutions are not the ones to favour. It is better to stick to a single machine solution that avoids distributed state.

Column-Oriented Storage

Commercial column oriented databases have been around for fifteen years [24] but have only become mainstream in the last few years. This can be attributed to the technology’s natural maturation as well as the increasing data needs of average users making column oriented technologies increasingly attractive.

Column orientation changes the way data is physically ordered on disk and its repercussions on performance are fairly extensive when compared to row orientation. Of the technologies discussed here the trade-offs between column/row approaches are probably the hardest to understand fully. A precis of the issues is given below but a fuller treatment can be found in [11].

By storing data in columns certain operations can be optimised in several ways not available to row stores. Directed queries for single column values or queries comparing column values are naturally optimised in the columnar model as data blocks containing a column’s data are held contiguously on disk. Consider a simple query that sums integer values in a column: A row based store would need to read all rows from disk to memory before performing the summation of just one column. The column based approach however only need extract the data for that column. If there are 20 columns in the table only ~1/20th as much data must be read in the column model.
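The 1/20th figure can be reproduced with a small in-memory model. This is only a sketch: it counts values read rather than disk blocks, and the function names are illustrative.

```python
def make_table(row_count: int, column_count: int) -> list:
    """Build a row oriented table of integers (a list of rows)."""
    return [
        [row * column_count + col for col in range(column_count)]
        for row in range(row_count)
    ]


def to_column_store(rows: list) -> list:
    """Transpose the rows so each column's values are held contiguously."""
    return [list(column) for column in zip(*rows)]


def sum_column_row_store(rows: list, column_index: int):
    """Sum one column, counting every value read along the way."""
    total = 0
    values_read = 0
    for row in rows:
        values_read += len(row)      # the whole row is read to reach one value
        total += row[column_index]
    return total, values_read


def sum_column_column_store(columns: list, column_index: int):
    """Sum one column by reading only that column."""
    column = columns[column_index]
    return sum(column), len(column)


if __name__ == "__main__":
    rows = make_table(row_count=100, column_count=20)
    columns = to_column_store(rows)
    print(sum_column_row_store(rows, 3))
    print(sum_column_column_store(columns, 3))
```

Both approaches return the same sum, but for a 20 column table the row oriented scan reads twenty times as many values to get there.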

In addition to this more precise retrieval for single column queries, holding data as columns facilitates data compression in a way that cannot be replicated in a row based store. Columns tend to contain repeating elements, particularly when cardinality is low. As this column data is stored contiguously on disk the opportunity for compression is thus hugely increased. This reduces the amount of data that needs to be stored, and hence that which must be moved across the network.
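As one illustration of why contiguous, repetitive column data compresses well, the sketch below uses run-length encoding. This is just one simple scheme chosen for the example; the text does not say which compression techniques any given product uses.

```python
from itertools import groupby


def run_length_encode(values: list) -> list:
    """Collapse consecutive repeats into (value, run length) pairs."""
    return [(value, sum(1 for _ in group)) for value, group in groupby(values)]


def run_length_decode(runs: list) -> list:
    """Expand (value, run length) pairs back into the original column."""
    return [value for value, count in runs for _ in range(count)]


if __name__ == "__main__":
    # A low cardinality column, as it might sit contiguously on disk.
    currency_column = ["GBP"] * 5 + ["USD"] * 3 + ["GBP"] * 2
    encoded = run_length_encode(currency_column)
    print(encoded)
    print(run_length_decode(encoded) == currency_column)
```

In a row store the same currency values would be interleaved with every other field of each row, so runs like these would not be adjacent and this kind of encoding would have far less to work with.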

There are of course downsides to the column oriented model, the most notable being slow inserts when compared to row based alternatives. In column stores a single ‘row’ is actually spread across different parts of the disk (i.e. one section per column). Writing a single row thus involves the mutation of separate blocks for each column the row contains, with each incurring a separate I/O operation. The row based approach, by comparison, writes the entire row’s data as a contiguous section in a single I/O.

The problem with returning large numbers of columns is analogous. Each column in the result set must be ‘sewn’ back together (known as tuple construction). In the extreme case of returning a single row of many columns the cost would be one I/O per column as opposed to a single I/O for the row based approach. A full treatment of columnar stores can be found in [11].
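The cost of writing or reassembling a single row can be estimated by counting the disk blocks touched under each layout. The model below is deliberately simple and its assumptions are mine: fixed width values, rows or columns laid out back to back from offset zero, no buffering, and one I/O per block touched.

```python
def blocks_touched_row_store(row_index: int, column_count: int,
                             values_per_block: int) -> int:
    """Blocks touched when a whole row is stored contiguously."""
    first_offset = row_index * column_count
    last_offset = first_offset + column_count - 1
    return last_offset // values_per_block - first_offset // values_per_block + 1


def blocks_touched_column_store(row_index: int, row_count: int,
                                column_count: int, values_per_block: int) -> int:
    """Blocks touched when each column is stored contiguously."""
    blocks = {
        (column * row_count + row_index) // values_per_block
        for column in range(column_count)
    }
    return len(blocks)


if __name__ == "__main__":
    print(blocks_touched_row_store(5, column_count=20, values_per_block=100))
    print(blocks_touched_column_store(5, row_count=1000, column_count=20,
                                      values_per_block=100))
```

With 1,000 rows of 20 columns and 100 values per block, the row layout touches a single block for row 5 while the column layout touches 20, one per column, matching the one I/O per column extreme described above.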

Distributed In Memory Storage

Over the last thirty-five years processor speeds have increased dramatically, as have memory sizes and disk availability. But the change in bandwidth/latency between disk and main memory has been less dramatic [16]. Distributed caches leverage this fact by relying solely on memory access. Traditionally caches are primed with, or lazily load, a subset of the application’s data, providing a fast and scalable environment for a well known data subset (due to the size limitations of memory based storage). However, increasingly, caching technologies are branching into the realm of the traditional database by offering advanced querying functionality, indexing and fault tolerance. Some even have transaction management. They generally utilise shared nothing architectures, with the absence of disk persistence making them faster than comparable disk based technologies. They reside in the world of objects rather than the relational form and generally lack the benefits of ACID [23], most notably durability (although fault tolerance is often included, making them insensitive at least to single machine failure). These factors change the contract the application has with the data store, pushing transactionality into the realm of the user with the recompense of increased performance. This makes their use as a primary store a relatively niche affair, with only a small user base willing to either forgo ACID qualities completely or accept the cost of managing them themselves.

This lack of ACID means caching technologies are generally used as a performance enabler, allowing users to disassociate critical data access requirements from a disk based, transactional store. This cache-aside model complements rather than competes with traditional database technologies. As an example, distributed caching is often used in conjunction with large compute grids to provide the compute nodes with the high bandwidth access to the data they need. If the data set is well known, and loaded from a database, then there is no requirement for consistency checks in the cache itself. The data’s existence in the DBMS guarantees Consistency and Durability by proxy.
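A minimal sketch of the cache-aside model follows. The `CacheAside` class and its loader callback are hypothetical; a plain dictionary stands in for the distributed cache and a function stands in for the query against the transactional store.

```python
class CacheAside:
    """Serve reads from memory, falling back to the database on a miss."""

    def __init__(self, load_from_database):
        self._load_from_database = load_from_database
        self._cache = {}
        self.database_reads = 0

    def prime(self, keys) -> None:
        """Eagerly load a well known subset of the data."""
        for key in keys:
            self.get(key)

    def get(self, key):
        if key not in self._cache:
            # Lazy load: the database remains the durable system of record.
            self._cache[key] = self._load_from_database(key)
            self.database_reads += 1
        return self._cache[key]


if __name__ == "__main__":
    database = {"trade-1": 100.0, "trade-2": 250.0}
    cache = CacheAside(load_from_database=database.__getitem__)
    cache.get("trade-1")
    cache.get("trade-1")
    print("database reads:", cache.database_reads)
```

Repeated reads of the same key hit the database only once. Writes are deliberately left out: they still go to the database, which is what preserves durability.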

Distributed caching is different to the other technologies cited here in that it often augments an existing data architecture. This default Use Case is the simplest and provides significant gains where bandwidth is the critical requirement. However there is an emerging, more advanced, application where the data-fabric is used to collocate data and processing. In many ways this is akin to the evolution of database systems as processing units with storage side functions such as stored procedures and triggers. Data-fabrics take this paradigm and apply it in traditional programming languages such as Java deployed in a distributed environment. This creates a unique programming environment that mingles storage, processing and distributed computing, blurring the line between the traditional application and data layers. One vendor, GigaSpaces [20], now actively markets itself as the scale out application server. Others like Oracle Coherence are pushing more server side functionality that facilitates collocation of data and processing.
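The benefit of collocating data and processing can be sketched by counting what crosses the network. The two functions below are illustrative only and do not model any vendor’s API; lists stand in for partitions held on separate nodes.

```python
def ship_data_then_compute(partitions: list, compute):
    """Pull every value to the caller, then compute centrally."""
    shipped = [value for partition in partitions for value in partition]
    return compute(shipped), len(shipped)


def compute_where_data_lives(partitions: list, compute_local, combine):
    """Run the function on each partition and ship back only the partials."""
    partials = [compute_local(partition) for partition in partitions]
    return combine(partials), len(partials)


if __name__ == "__main__":
    partitions = [[1, 2, 3], [4, 5], [6]]
    print(ship_data_then_compute(partitions, sum))
    print(compute_where_data_lives(partitions, sum, sum))
```

Both calls return the same total, but the second moves one partial result per partition rather than every value. This only works when the computation decomposes into local steps plus a cheap combine, which is the same constraint that governs partitioned queries.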

However such usage patterns come at an inevitable price: the increased complexity that always accompanies applications that utilise distributed, shared state.

Conclusions

For the majority of enterprise users the single node database will likely remain the de facto standard for data storage despite its limitations. This entrenched popularity is unsurprising considering the broad range of Use Cases that the traditional technology stack will facilitate. Where this is sufficient, users have little reason to change.

However the technologies discussed in this article pander to markets seeking alternatives that perform at the extremes of scalability, throughput and latency. Such technologies operate at a lower level of abstraction than that offered by most off-the-shelf, shrink-wrapped products. This makes the programming domain tougher to navigate and more sensitive to error.

Any distributed data technology simply requires extra thought throughout the implementation as worst case scenarios are far graver than their single machine equivalents (think joining where the join keys are located on different machines). Experience in this industry shows there is still a significant design and development cost associated with this additional level of complexity when compared to traditional database products operating at a higher level of abstraction. Choosing one of these solutions requires sound justification for this additional cost, normally through a clear requirement for scalability beyond a single machine.

So how do you determine if this additional complexity is worth the expense?

There is no simple answer to this. A judgement call must be made based on an understanding of what the different technologies have to offer. To broadly summarise: Column orientation provides an architectural change that facilitates Data Warehouse workloads but requires writes to be batched, making the technology unsuitable for OLTP workloads. Shared Nothing provides the possibility of massive scalability if the data distributions and workloads can be partitioned effectively. The choice of memory or disk based solutions can be determined by evaluating the system’s requirements for storage vs. latency. In memory solutions will only hold small datasets (under a TB) but can vend this data in massive volume at low latency. Disk based versions extend storage hugely but latencies can be orders of magnitude slower.

So could these technologies change the way we treat data in the enterprise? Until interconnect speed catches up with other hardware metrics, more ‘extreme’ users have little choice but to embrace the distributed world. The Googles and Facebooks of the world have made these progressions through necessity, their Use Cases hugely exceeding the specifications of any scale-up architecture. The enterprise application space however still largely has a choice. Scale-up solutions are significantly simpler to manage and far more flexible in terms of the Use Cases they can efficiently support. However, increasingly, large organisations need more scalability to facilitate large compute driven workloads, be it centralised data repositories, complex data aggregation tasks such as risk calculators or the vending of data to large compute grids. For these users these progressive technologies open the doors to a scale of application not previously achievable.

References:

[1] http://en.wikipedia.org/wiki/Disruptive_technologies

[2] http://couchdb.apache.org/docs/overview.html

[3] http://labs.google.com/papers/bigtable-osdi06.pdf

[4] http://www.julianbrowne.com/article/viewer/brewers-cap-theorem

[5] http://en.wikipedia.org/wiki/Consensus_(computer_science)

[6] http://en.wikipedia.org/wiki/Logical_clock

[7] http://en.wikipedia.org/wiki/Distributed_concurrency_control

[8] http://www.oracle.com/database/exadata.html

[9] http://en.wikipedia.org/wiki/Shard_%28database_architecture%29

[10] http://en.wikipedia.org/wiki/Fact_table

[11] http://cs-www.cs.yale.edu/homes/dna/papers/abadiphd.pdf

[12] http://www.vldb.org/conf/2007/papers/industrial/p1150-stonebraker.pdf

[13] http://db.cs.yale.edu/hstore/

[14] http://en.wikipedia.org/wiki/Moore’s_law

[15] http://www.benstopford.com/2009/11/24/understanding-the-shared-nothing-architecture/

[16] http://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.115.7415&rep=rep1&type=pdf

[17] http://en.wikipedia.org/wiki/Scalability#Scale_vertically_.28scale_up.29

[18] http://en.wikipedia.org/wiki/IBM_System_R

[19] http://en.wikipedia.org/wiki/Shared_nothing_architecture

[20] http://www.gigaspaces.com

[21] “The Gamma Database Machine Project”, DeWitt et al. IEEE Transactions on Knowledge and Data Engineering, March 1990. http://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.113.6798&rep=rep1&type=pdf

[22] http://en.wikipedia.org/wiki/Olap

[23] http://en.wikipedia.org/wiki/ACID

[24] http://www.sybase.com/files/White_Papers/Sybase-IQ-Competitive-Assessment-070209-WP.pdf

[25] http://en.wikipedia.org/wiki/InfiniBand